Task

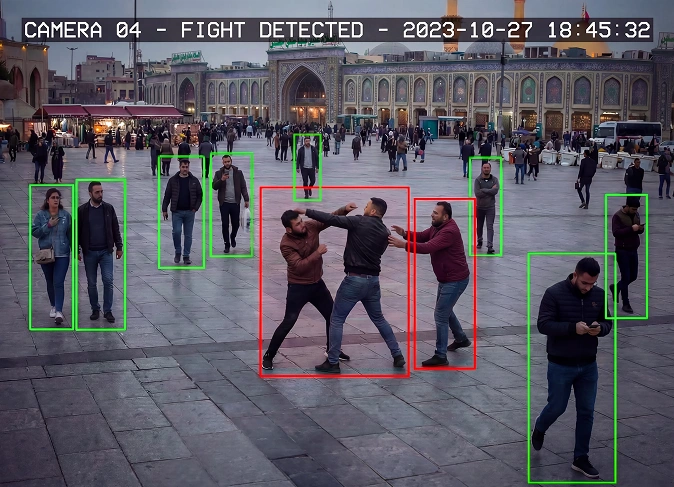

The client required high-quality, multi-angle video data for training emotion recognition models. Each participant had to perform scripted emotional expressions in English, recorded simultaneously from three camera angles to enable precise analysis of facial expressions, micro-expressions, and lip sync.

The project involved:

- Creating a custom multi-camera recording setup

- Ensuring frame-accurate synchronization

- Working with actors performing emotional scenarios

- Maintaining consistent visual quality across different recording periods

- Building a scalable and repeatable production pipeline suitable for AI training

Key challenges included:

- Keeping three cameras synchronized without frame drops or drift

- Physical filming constraints, including heat, long sessions, and studio limitations

- Acceptance criteria that were unclear in the early stages and had to be aligned with the client during production

- Actor selection and validation, including emotional accuracy and consistency

- Data rejection risks caused by lighting artifacts, facial occlusions, or sync issues

Solution

Technical setup optimization

After extensive testing, the team developed a stable and scalable setup using:

- Three professional-grade mobile cameras recording in 4K at 60 FPS

- A centralized camera control system for synchronized operation

- An additional mobile device used as a control hub to manage and monitor all cameras

This configuration delivered frame-accurate synchronization and eliminated the stability issues seen in earlier test setups.

Special credit goes to the engineering team for developing and refining this workflow from scratch.
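One way to check that a take is frame-accurate is to compare each camera's recording start time against the earliest camera and convert the difference into frames at the 60 FPS capture rate. The sketch below is illustrative only: it assumes each camera's start time is available in seconds (for example from file metadata), and the camera names, the functions `frame_offsets` and `is_frame_accurate`, and the tolerance parameter are hypothetical, not the team's actual tooling.

```python
from typing import Dict, Optional

FPS = 60  # capture rate of the setup described above


def frame_offsets(start_times: Dict[str, float], fps: int = FPS,
                  reference: Optional[str] = None) -> Dict[str, int]:
    """Return each camera's start offset from a reference camera, in whole frames.

    Hypothetical helper: start_times maps camera name -> start time in seconds.
    By default the earliest-starting camera is used as the reference.
    """
    if not start_times:
        raise ValueError("no cameras given")
    ref = reference if reference is not None else min(start_times, key=start_times.get)
    ref_t = start_times[ref]
    # Round to the nearest frame: offsets under half a frame count as 0.
    return {cam: round((t - ref_t) * fps) for cam, t in start_times.items()}


def is_frame_accurate(start_times: Dict[str, float], fps: int = FPS,
                      tolerance_frames: int = 0) -> bool:
    """True if every camera starts within tolerance_frames of the reference."""
    offsets = frame_offsets(start_times, fps)
    return all(abs(o) <= tolerance_frames for o in offsets.values())


if __name__ == "__main__":
    take = {"front": 12.000, "left": 12.004, "right": 12.020}  # hypothetical values
    print(frame_offsets(take))
    print(is_frame_accurate(take))
```

For the sample values, the right camera starts about 20 ms late, which rounds to one frame at 60 FPS, so the strict check fails while a one-frame tolerance would pass. Working in frames rather than milliseconds keeps the threshold tied to the capture rate.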

Studio and production optimization

During the project, several filming locations were tested:

- Professional sound studios

- Coworking spaces adapted for filming

- A fully reconfigured internal studio space

To reduce costs and improve flexibility, the final stage was recorded in a customized in-house studio, giving the team full control over the environment without rental expenses.

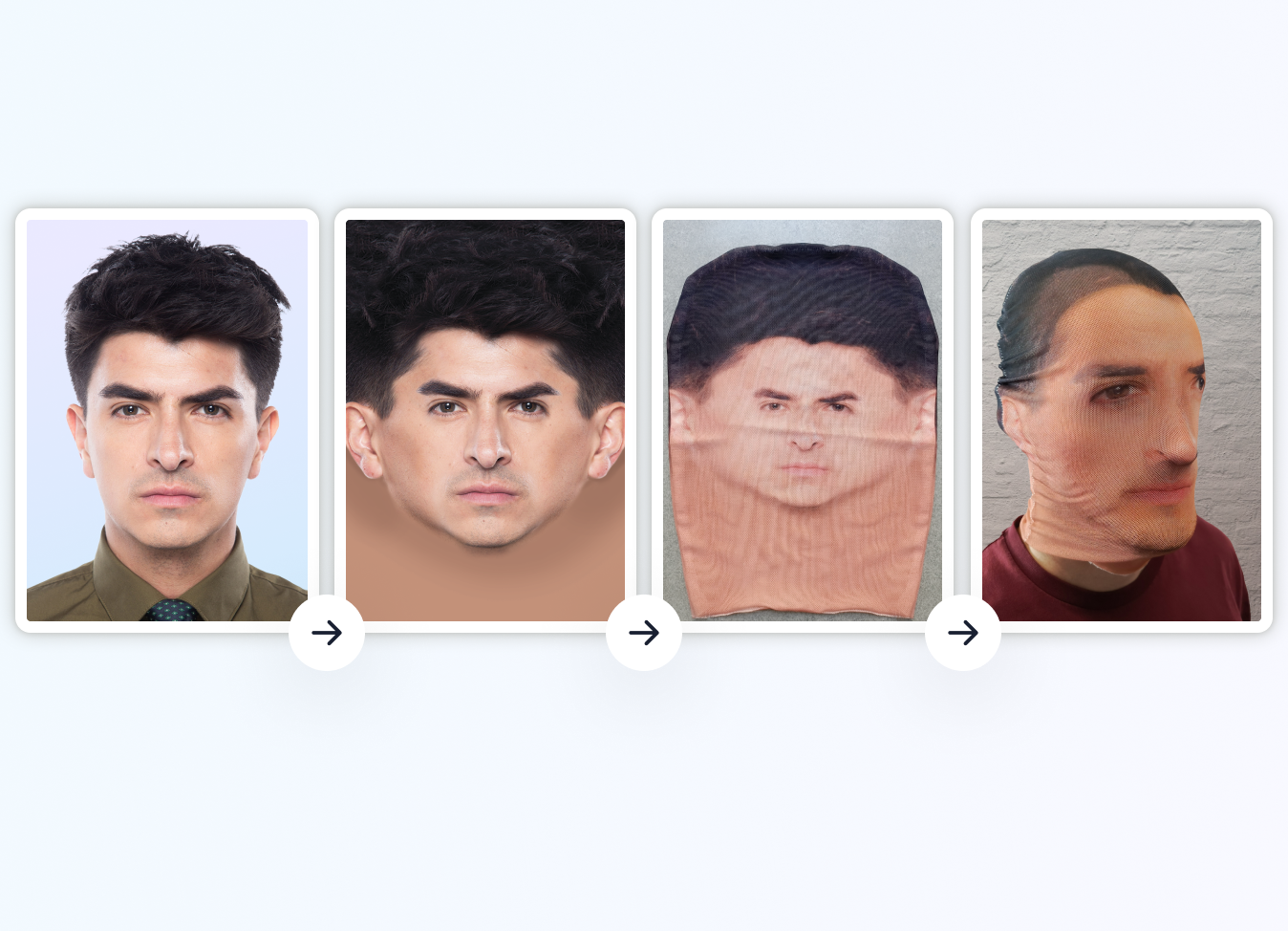

Actor validation and quality filtering

To minimize rejection rates, a multi-step validation process was introduced:

- Pre-screening via recorded self-introductions

- Live online validation sessions with real-time feedback

- Joint evaluation with the client before final approval

This approach significantly reduced the risk of unusable data and improved alignment with client expectations.

Quality control and data validation

A multi-layer QC process was implemented:

- Verification of facial visibility (no glare from glasses and no occlusions)

- Synchronization checks across all camera angles

- Validation of emotional expressiveness and timing

- Consistent file naming and metadata alignment
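Part of the naming and completeness checks listed above can be automated. The sketch below assumes a hypothetical naming convention, `<participant>_<emotion>_<take>_<camera>.mp4` (for example `P012_anger_T01_front.mp4`), and hypothetical camera names `front`, `left`, and `right`; the real project's convention is not described here. It flags files that break the pattern and takes that are missing one or more of the three angles.

```python
import re
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical naming convention: <participant>_<emotion>_<take>_<camera>.mp4
NAME_RE = re.compile(r"^(P\d{3})_([a-z]+)_(T\d{2})_(front|left|right)\.mp4$")
CAMERAS = {"front", "left", "right"}  # hypothetical names for the three angles


def validate_takes(filenames: List[str]) -> Tuple[List[str], Dict[Tuple[str, str, str], List[str]]]:
    """Return (bad_names, incomplete_takes).

    bad_names: files that do not match the naming pattern.
    incomplete_takes: (participant, emotion, take) -> sorted list of missing angles.
    """
    bad_names: List[str] = []
    angles = defaultdict(set)
    for name in filenames:
        m = NAME_RE.match(name)
        if m is None:
            bad_names.append(name)
            continue
        participant, emotion, take, camera = m.groups()
        angles[(participant, emotion, take)].add(camera)
    # A take is complete only if all three camera angles are present.
    incomplete = {
        key: sorted(CAMERAS - cams) for key, cams in angles.items() if cams != CAMERAS
    }
    return bad_names, incomplete


if __name__ == "__main__":
    files = [
        "P012_anger_T01_front.mp4",
        "P012_anger_T01_left.mp4",
        "P012_anger_T01_right.mp4",
        "P012_joy_T01_front.mp4",
        "p12-joy-left.mov",
    ]
    print(validate_takes(files))
```

Grouping files by take before checking angles lets a single pass catch both naming errors and missing recordings, which are the kinds of issues that would otherwise surface only at client review.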

Result

- Designed and deployed a stable multi-camera capture system for high-precision data collection

- Built a centralized control workflow enabling real-time recording, synchronization, and quality monitoring

- Successfully recorded 47 identity sessions under production conditions