Data Annotation and Labeling Services for ML

Data annotation is labeling raw data with tags or metadata, making it interpretable for ML algorithms. Unidata provides expert annotation services that turn raw data into accurate training datasets, using trained human annotators and advanced tooling to improve model performance across industries.

- 95%+ annotation accuracy

- 1,000+ domain-matched annotators

- Pilot launched within days

Data Annotation Vs Labeling Tasks

| | Data Annotation | Data Labeling |
|---|---|---|
| Definition | Comprehensive process of adding metadata, tags, and structural information to raw data for machine learning comprehension | Specific task of assigning target labels or classes to data points for supervised learning |
| Work Coverage | Holistic: includes labeling, segmentation, bounding, transcription, relationship mapping, and metadata enrichment | Narrower: primarily classification or regression target assignment |
| Common Tasks | | |
| Complexity Level | High complexity: requires domain expertise, spatial-temporal reasoning, and understanding of relationships | Low to medium complexity: primarily follows straightforward guidelines with binary or categorical decisions |
| ML Impact | Enables advanced models: object detection, semantic segmentation, pose estimation, action recognition, relationship learning | Enables fundamental models: classification, regression, basic recognition, content filtering |

How Unidata Delivers the Data Labeling Process

A Clear, Controlled Workflow From Brief to Delivery

- You

- Share your raw data, annotation requirements, and quality standards

- Unidata

- We analyze your data, define the methodology, and assign a dedicated project lead. The right annotation type and domain-matched annotators are confirmed before anything starts.

- You

- Review annotated samples, validate quality, and approve scope before full-scale work begins.

- Unidata

- We annotate a small representative sample and deliver a clear cost estimate broken down by complexity, hours, and validation rounds.

- You

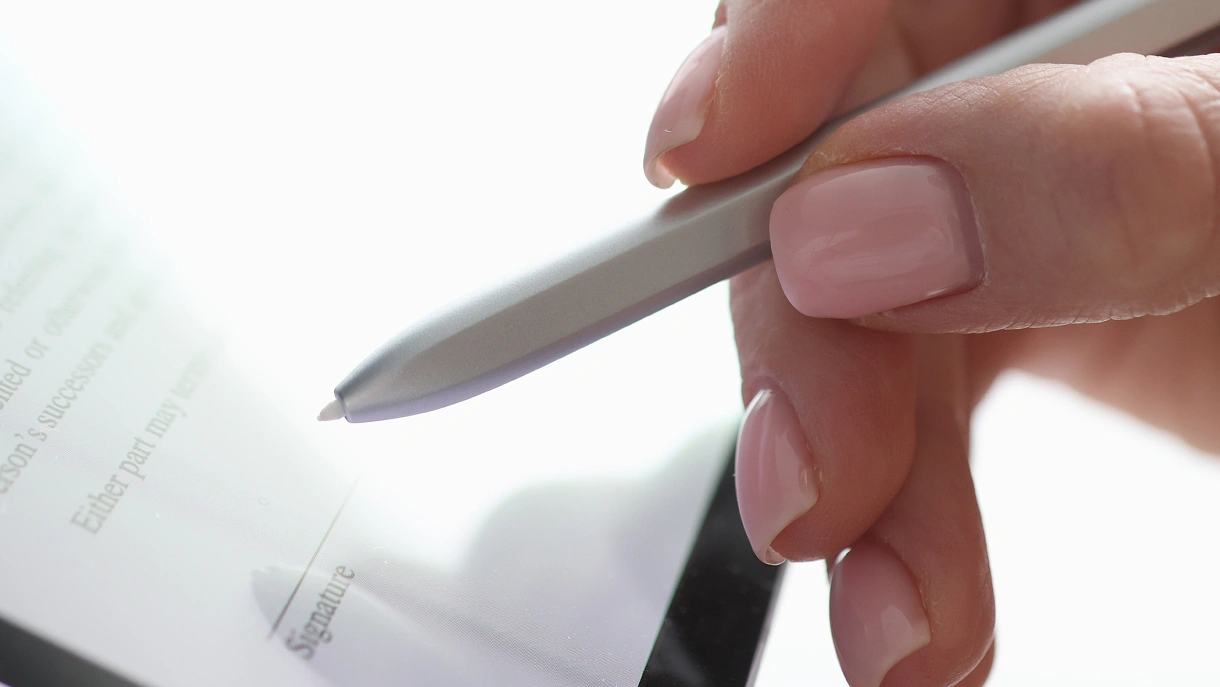

- Review and sign. Scope, quality thresholds, and deadlines are all defined in writing upfront.

- Unidata

- We prepare a full confidentiality agreement covering your data, guidelines, and any proprietary model details.

- You

- Share existing guidelines and format requirements. No guidelines yet? We build them together.

- Unidata

- We configure the right annotation platform for your data type: Labelbox, SuperAnnotate, CVAT, or Label Studio. Workflows, label taxonomy, and quality benchmarks are set before a single label is applied.

- You

- Review sample batches at each milestone and share feedback with your project lead.

- Unidata

- Trained, domain-matched annotators work through your dataset. No batch moves forward without passing internal quality checks.

- You

- Review edge cases and confirm acceptance criteria before final delivery.

- Unidata

- Every batch goes through automated validation and human review. Inter-annotator agreement (IAA) is tracked throughout. Inconsistencies are caught and resolved before the dataset moves forward.

- You

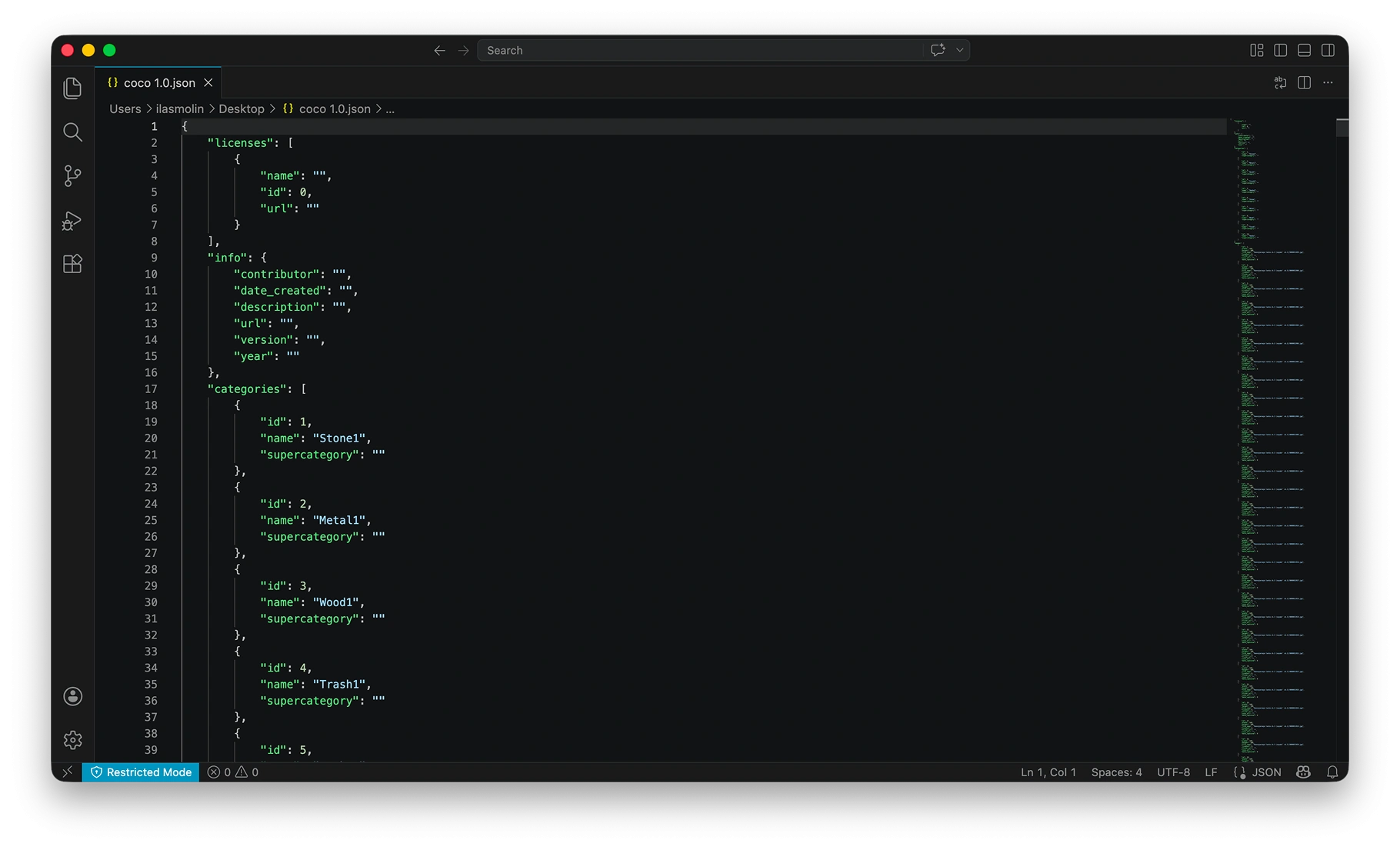

- Receive your annotated dataset in the format you need: COCO, Pascal VOC, JSON, CoNLL, PCD, or custom. Full quality report included.

- Unidata

- Clean, validated, training-ready data delivered on schedule. Final invoice aligned to the scope agreed at Step 02.
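To make the inter-annotator agreement (IAA) mentioned in the workflow concrete, here is a minimal sketch of one common IAA measure, Cohen's kappa, for two annotators labeling the same items. The page does not say which metric Unidata uses, so the choice of kappa, the function name `cohens_kappa`, and the sample labels are all assumptions made for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items in order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)

    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two annotators for six objects.
annotator_1 = ["car", "car", "person", "car", "person", "bike"]
annotator_2 = ["car", "person", "person", "car", "person", "bike"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.3f}")
```

A value near 1 indicates strong agreement beyond chance, while a value near 0 means the annotators agree about as often as random labeling would. Any acceptance threshold is a project decision and is not specified here.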

Have questions about the process? Every project starts with a free consultation — no commitment required.

Data Annotation Challenges? Value You Get with Unidata

Real Challenges

- No annotators, tools, or workflow to process collected data

- No quality check on labeled data before it hits the pipeline

- No way to ensure two annotators label the same object consistently

- Can’t find annotators with LiDAR, medical, or financial expertise

- Scope creep and rework cycles exhaust the budget before delivery

Value with Unidata

- Project lead assigned and pilot launched within days

- Every batch validated before delivery, 95%+ accuracy via multi-stage QA

- Label consistency tracked per batch, issues caught before training fails

- 1,000+ annotators matched by domain — the right expert, every time

- Pilot-first pricing, fixed scope, zero hidden rework charges
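As an illustration of what an automated check on labeled data can look like before it reaches a training pipeline, the sketch below scans a COCO-style dictionary for a few structural problems. It is not Unidata's QA tooling: the function name `validate_coco`, the specific rules, and the sample data are assumptions. The only format fact relied on is that COCO boxes are stored as `[x, y, width, height]` in pixels.

```python
from typing import Any


def validate_coco(dataset: dict[str, Any]) -> list[str]:
    """Return human-readable problems found in a COCO-style annotation dict."""
    problems: list[str] = []
    images = {img["id"]: img for img in dataset.get("images", [])}
    category_ids = {cat["id"] for cat in dataset.get("categories", [])}

    for ann in dataset.get("annotations", []):
        ann_id = ann.get("id")
        image = images.get(ann.get("image_id"))
        if image is None:
            problems.append(f"annotation {ann_id}: unknown image_id")
            continue
        if ann.get("category_id") not in category_ids:
            problems.append(f"annotation {ann_id}: unknown category_id")

        # A missing bbox falls back to zeros and is reported as an empty box.
        x, y, w, h = ann.get("bbox", [0, 0, 0, 0])
        if w <= 0 or h <= 0:
            problems.append(f"annotation {ann_id}: empty box")
        elif x < 0 or y < 0 or x + w > image["width"] or y + h > image["height"]:
            problems.append(f"annotation {ann_id}: box outside image")
    return problems


# Hypothetical batch: one valid box, one out-of-bounds box, one bad category.
sample = {
    "images": [{"id": 1, "file_name": "frame_001.jpg", "width": 640, "height": 480}],
    "categories": [{"id": 1, "name": "car"}],
    "annotations": [
        {"id": 10, "image_id": 1, "category_id": 1, "bbox": [10, 20, 100, 200]},
        {"id": 11, "image_id": 1, "category_id": 1, "bbox": [600, 400, 100, 100]},
        {"id": 12, "image_id": 1, "category_id": 99, "bbox": [0, 0, 10, 10]},
    ],
}
issues = validate_coco(sample)
for issue in issues:
    print(issue)
```

Checks like these catch only structural errors. Whether a box is drawn around the right object still needs human review.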

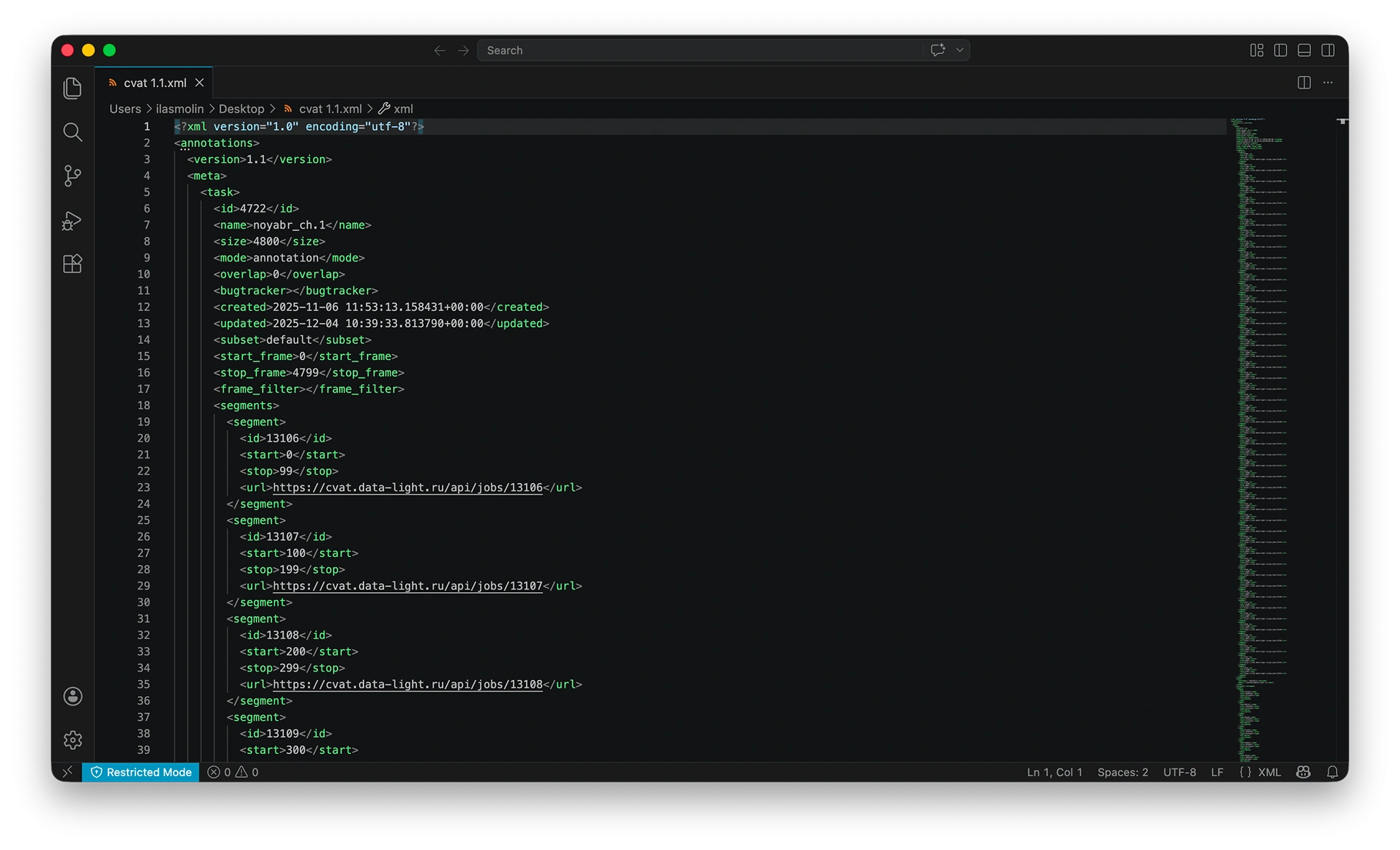

Data Annotation Files Example

For annotation data exported in CVAT and JSON formats, you receive code that processes both file types, along with practical examples and visual representations of your data structure.
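The sketch below shows one way code might read both file types into a common record shape. It assumes the CVAT file uses the "CVAT for images" XML layout (`<image>` elements containing `<box>` elements with `label`, `xtl`, `ytl`, `xbr`, `ybr` attributes) and that the JSON file is COCO-style. The page does not specify either layout, and the function names and sample data are hypothetical.

```python
import json
import xml.etree.ElementTree as ET


def boxes_from_cvat_xml(xml_text: str) -> list[dict]:
    """Read bounding boxes from CVAT-for-images style XML (assumed layout)."""
    root = ET.fromstring(xml_text)
    records = []
    for image in root.iter("image"):
        for box in image.iter("box"):
            records.append({
                "image": image.get("name"),
                "label": box.get("label"),
                "xmin": float(box.get("xtl")),
                "ymin": float(box.get("ytl")),
                "xmax": float(box.get("xbr")),
                "ymax": float(box.get("ybr")),
            })
    return records


def boxes_from_coco_json(json_text: str) -> list[dict]:
    """Read bounding boxes from COCO-style JSON (assumed layout)."""
    data = json.loads(json_text)
    names = {img["id"]: img["file_name"] for img in data["images"]}
    labels = {cat["id"]: cat["name"] for cat in data["categories"]}
    records = []
    for ann in data["annotations"]:
        x, y, w, h = ann["bbox"]  # COCO stores [x, y, width, height]
        records.append({
            "image": names[ann["image_id"]],
            "label": labels[ann["category_id"]],
            "xmin": float(x),
            "ymin": float(y),
            "xmax": float(x + w),
            "ymax": float(y + h),
        })
    return records


# Hypothetical exports describing the same single box in both formats.
cvat_xml = """
<annotations>
  <image id="0" name="frame_001.jpg" width="640" height="480">
    <box label="car" xtl="10" ytl="20" xbr="110" ybr="220" occluded="0"/>
  </image>
</annotations>
"""
coco_json = json.dumps({
    "images": [{"id": 1, "file_name": "frame_001.jpg", "width": 640, "height": 480}],
    "categories": [{"id": 1, "name": "car"}],
    "annotations": [{"id": 1, "image_id": 1, "category_id": 1, "bbox": [10, 20, 100, 200]}],
})

cvat_records = boxes_from_cvat_xml(cvat_xml)
coco_records = boxes_from_coco_json(coco_json)
print(cvat_records == coco_records)
```

Normalizing both sources to corner coordinates (`xmin`, `ymin`, `xmax`, `ymax`) lets downstream code compare or merge boxes without caring which tool produced them.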

Why Companies Trust Unidata’s Services for ML/AI

Share your project requirements and we handle the rest. Every service is tailored, executed, and compliance-ready, so you can focus on strategy and growth, not operations.

Ready to get started?

Tell us what you need and we'll reply within 24 hours with a free estimate.

Andrew, Head of Client Success: "I'll guide you through every step, from your first message to full project delivery."
