The Task

A telecom client needed Arabic language data to validate internal AI tools.

Arabic is not a single operating language. Dialects vary so strongly that speakers from different regions may struggle to understand each other. At the same time, the client needed consistent, comparable results across tasks.

The scope included three parallel challenges:

- Verbatim transcription of Arabic audio with background noise, overlaps, laughter, and interruptions

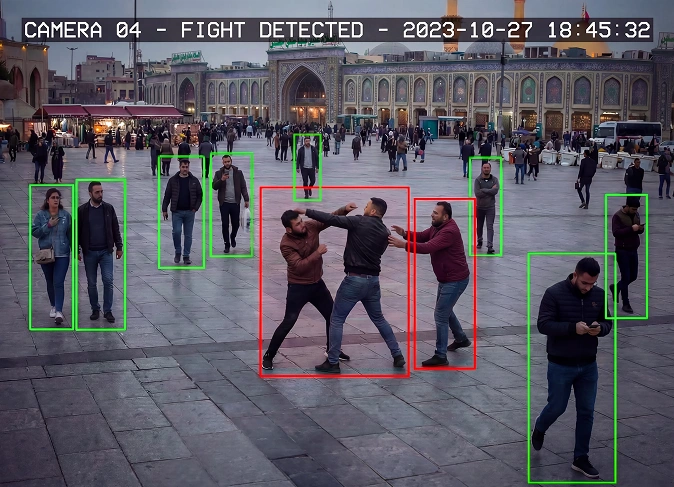

- Evaluation of audio recordings after noise suppression, including safety assessment

- Linguistic evaluation of LLM-generated Arabic texts based on a prompt and summary

Each task required native speakers. Some required dialect precision. All required strict linguistic judgment.

The Solution

Task Structuring

We separated the work into three independent pipelines:

- Speech transcription with explicit rules for non-speech events

- Audio quality and safety evaluation with clear scoring logic

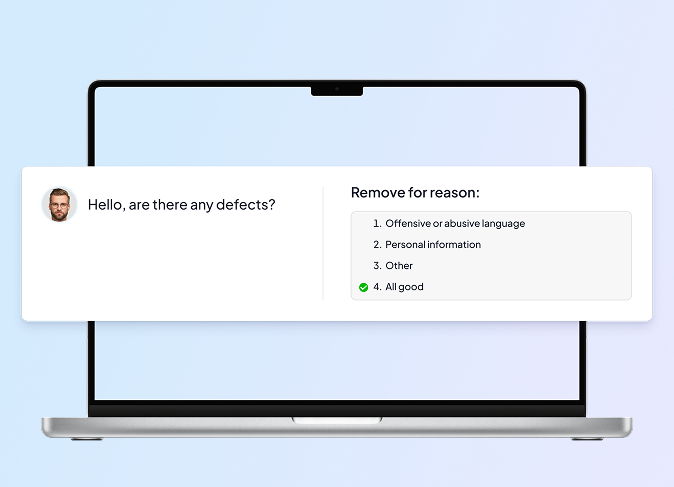

- LLM output evaluation with linguistic and semantic criteria

Each pipeline had its own guideline, examples, and quality signals. This avoided confusion and reduced subjective interpretation.

Dialect Mapping

Arabic is not a single working language, and dialect differences are critical. That's why we worked with:

- Gulf dialects, including UAE and Saudi Arabia

- North African dialects, including Morocco and Algeria

We accounted for real linguistic behavior:

- English loanwords common in Gulf speech

- French insertions typical for North Africa

- Strong phonetic and lexical differences between regions

Annotators were matched to tasks strictly by dialect.
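The strict matching rule can be sketched in a few lines of Python. This is a minimal illustration, not the tooling used on the project: the `Annotator` and `Task` classes, the dialect labels such as `gulf_uae`, and the `eligible_annotators` helper are all hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotator:
    name: str
    dialect: str       # hypothetical label for the dialect confirmed by a test task
    passed_test: bool  # True only if the test task confirmed native proficiency


@dataclass(frozen=True)
class Task:
    task_id: str
    dialect: str       # dialect of the audio or text to be annotated


def eligible_annotators(task: Task, annotators: list[Annotator]) -> list[Annotator]:
    """Return only validated annotators whose dialect matches the task exactly."""
    return [a for a in annotators if a.passed_test and a.dialect == task.dialect]


# Illustrative data only; names and labels are made up.
annotators = [
    Annotator("A1", "gulf_uae", True),
    Annotator("A2", "maghrebi_morocco", True),
    Annotator("A3", "gulf_uae", False),  # lives in the region but failed the test task
]
task = Task("t-001", "gulf_uae")
matched = eligible_annotators(task, annotators)
print([a.name for a in matched])
```

The exact-match comparison is deliberate in this sketch: a Gulf task is never routed to a North African annotator as a fallback, which mirrors the "strictly by dialect" rule.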

Annotator Sourcing

To control quality, we avoided mass recruitment. We quickly identified a common issue: regional presence did not guarantee native language competence.

That’s why we:

- Sourced annotators manually via targeted LinkedIn search

- Validated native proficiency through test tasks, not profiles

- Required English for operational communication

- Matched annotators to tasks strictly by dialect

A recurring issue was false positives: people living in Arabic-speaking countries who were not native speakers. These candidates were filtered out at the test stage. The final team was lean, predictable, and scalable.

Training and Calibration

Training was built around ambiguity, not theory.

- Test tasks revealed differences in how annotators interpreted transcription rules

- Feedback cycles aligned expectations quickly

- Special attention was given to LLM poetry evaluation, where grammar, logic, style, and prompt alignment all mattered

Annotators were trained to justify decisions, not just select labels.

In-Process Validation

Quality was monitored in real time.

- Ongoing reviews during production

- Immediate feedback on deviations

- Early detection of misunderstanding before it scaled

This minimized rework and protected timelines.
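One way to picture early detection of deviations is a running check over reviewed samples. The sketch below is an assumption about how such a check could look, not a description of the actual QA tooling: the `flag_deviations` function, the 20% error threshold, and the minimum of three reviews are all hypothetical values chosen for illustration.

```python
from collections import defaultdict


def flag_deviations(reviews, max_error_rate=0.2, min_reviews=3):
    """Flag annotators whose share of failed reviews exceeds the threshold.

    `reviews` is an iterable of (annotator_name, passed_review) pairs.
    Annotators with fewer than `min_reviews` reviewed items are skipped,
    since a single failed item is too little evidence.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for annotator, passed in reviews:
        totals[annotator] += 1
        if not passed:
            errors[annotator] += 1
    return sorted(
        name
        for name in totals
        if totals[name] >= min_reviews
        and errors[name] / totals[name] > max_error_rate
    )


# Illustrative review log; names and outcomes are made up.
reviews = [
    ("A1", True), ("A1", True), ("A1", True),
    ("A2", True), ("A2", False), ("A2", False),
    ("A3", False),
]
flagged = flag_deviations(reviews)
print(flagged)
```

Running a check like this after each reviewed batch, rather than at final delivery, is what lets a misunderstanding surface before it spreads across the dataset.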

| Stage | Input | Workflow Scope | Main Quality Checks |
|---|---|---|---|
| Project Setup | Client brief, LLM tasks, audio recordings | Guideline development for transcription, evaluation, safety scoring | Clarity, reproducibility, task separation |
| Annotator Sourcing | Candidate profiles, LinkedIn search | Dialect-specific selection, native proficiency validation | Dialect accuracy, native-level competence |
| Training & Calibration | Test tasks, sample audio/text | Ambiguity resolution, feedback loops, justification of decisions | Annotation consistency, guideline adherence |
| Transcription | Audio recordings (noisy, overlapping) | Verbatim transcription, marking non-speech events | Correctness, completeness, noise handling |
| Audio & Safety Evaluation | Cleaned audio | Scoring for clarity, safety, linguistic behavior | Accuracy, reliability across dialects |
| LLM Output Evaluation | Arabic text outputs | Linguistic and semantic assessment, style & prompt alignment | Grammar, logic, semantic correctness |
| In-Process Validation | Annotated batches | Ongoing QA, real-time feedback | Early error detection, rework minimization |
| Final Delivery | Validated audio & text datasets | Dataset packaging, client handoff | Cross-dialect consistency, framework usability |

The Results

- A reusable Arabic annotation framework across speech and LLM tasks

- Stable performance across multiple dialects

- Consistent quality despite linguistic complexity

You can’t treat Arabic as a single language. High-quality annotations require careful dialect selection, clear rules, and constant calibration.

- Albina Romanova, Speech and Generative Data Group Manager