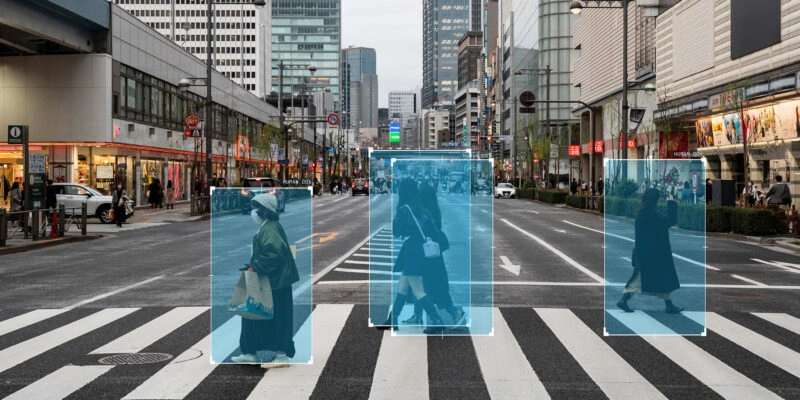

Data lineage in ML means tracing your data’s origin, its changes, and its full journey across tools and systems. It tells the story behind each data element. That lets you answer questions like: Which data sources feed this model? Which business users rely on the output? What happens if a source schema changes?

Tracking data provenance boosts data quality, supports compliance, reduces risk, and makes root cause analysis faster. This article unpacks data lineage for machine-learning professionals in a playful, expert voice — because clarity shouldn’t be boring.

Why You Should Care About Data Lineage

Clarity in an Ever‑Changing Data Environment

The world of big data and ML runs on constant streams of information. Without a clear map, your data pipelines can look like a tangled forest. Data lineage acts like a GPS. It shows you where data originates, tracks data changes, and explains every data transformation before you use that data to train models.

When you know the lineage, you can quickly check assumptions. You can also fix data quality issues faster.

Analysts explain that lineage supports fast root cause analysis and impact analysis. It also plays a major role in active metadata management. In short, the industry sees tracking data flows as a must-have for reliable ML.

Trust and Transparency for Business Users

Today’s enterprises need to share data access. That way, both business users and data scientists can trust what they see.

Data lineage brings transparency. When teams can see where the data originates and how it changes, they gain confidence. That trust helps them use the data in decisions. It also improves data governance. Lineage helps apply governance policies and protect sensitive data.

Faster Root Cause and Impact Analysis

Data systems can break in strange ways. You might notice a sudden spike in model error. Was it caused by a new data source? Or maybe a schema change? Data lineage gives you a real-time map to debug the issue.

Impact analysis gets easier, too. Lineage maps show downstream links. That means you can see which reports or ML features will break if something upstream changes. Less downtime means smoother ML workflows and more productive teams.

Compliance, Governance and Risk Management

Rules like GDPR, HIPAA, SOX, and BCBS 239 demand clear data handling practices. Data lineage supports regulatory compliance. It does this by tracing risk data back to the right data sources. It also helps check for data accuracy and timeliness.

Tracking data lineage also helps spot sensitive data, apply retention policies, and respond faster to data subject requests.

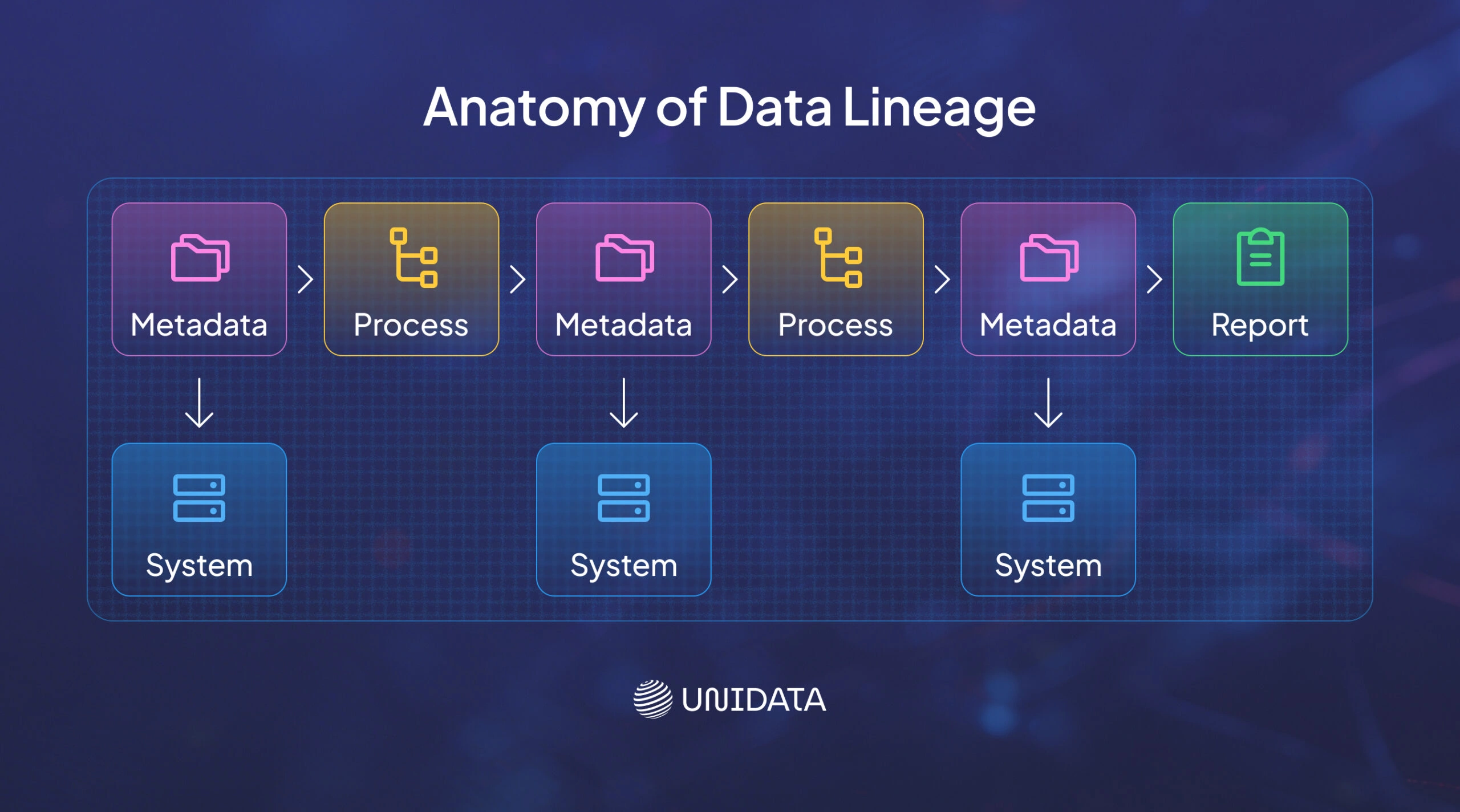

Anatomy of Data Lineage

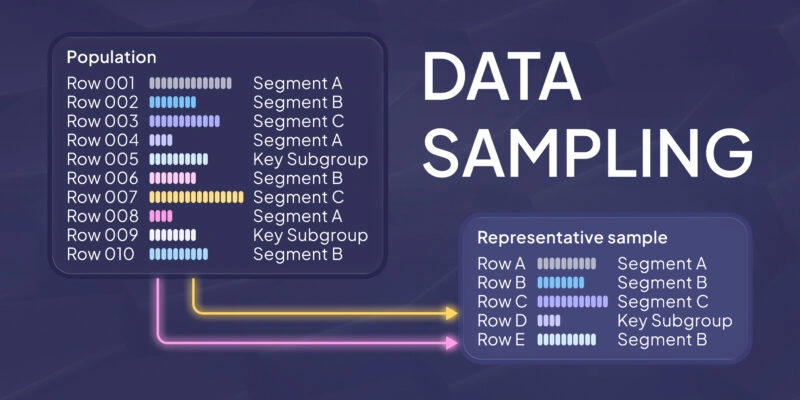

Data Sources and Data Elements

Data lineage starts with your data sources. These might be transactional systems, logs, CRM platforms, sensors, or public datasets. Each data element is like an ingredient in a recipe. You need to know where it came from, when it was pulled, and what it means in a business context.

A strong data catalog holds metadata that describes these elements. It includes details like data quality scores and data owners. This metadata also helps you classify data as structured, semi‑structured, or unstructured.

Data Pipelines and Transformations

After extraction, data moves through data pipelines. At each step, it’s transformed — filtered, cleaned, normalized, aggregated, or engineered into features.

Data lineage tools record every transformation and the logic behind it. Lineage maps the full journey — from the data source to the final stop. Knowing each step is key for analytics work.

Without this map, data transformations turn into a black box. That makes it hard to explain model outputs to business users or regulators.

Data Storage and Processing Systems

Eventually, data lands in a data lake, data warehouse, operational store, or an ML feature store. Each system adds complexity. A small change in a warehouse table might break dozens of dashboards and models.

Data lineage links all these systems. It shows where the data sits, how it's partitioned, and which dataset versions exist. It also describes the data processing setup — cluster settings, compute use, and third-party services.

Data Usage and Outputs

At the end, the data is used by data analysts, data scientists, or downstream apps. ML models, dashboards, business reports, and self-service tools all depend on clean data.

Lineage diagrams reveal which model uses which dataset. That lets you trace outputs back to the source. This end-to-end traceability is vital for root cause analysis, data provenance, and trust. It also helps assess how data changes affect business results.

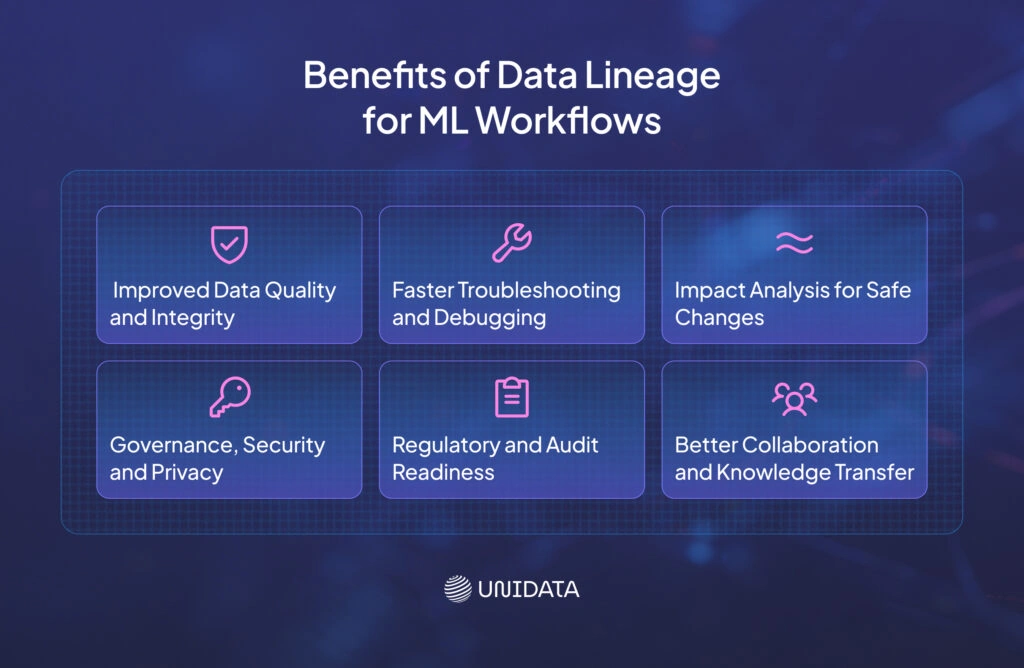

Benefits of Data Lineage for ML Workflows

Improved Data Quality and Integrity

Clean data leads to better models. Data lineage adds transparency that helps teams spot problems fast. When you trace each data field, you can catch duplicates, missing values, or inconsistent units — before they reach your training set.

Faster Troubleshooting and Debugging

Data downtime — when data is missing, wrong, or late — is a major issue for data engineers and data scientists. Lineage diagrams work like maps. They cut the guesswork when something fails.

Data lineage helps teams trace failures to their source and fix them faster.

Impact Analysis for Safe Changes

Before you change a pipeline, dataset, or transformation, you need to see the ripple effects. Data lineage shows which models, reports, or systems rely on that data.

This visibility lets you plan updates safely. You can assign the right teams and avoid breaking business-critical tools.

Governance, Security and Privacy

Data lineage makes data governance stronger. When you know where sensitive data lives and how it moves, you can apply proper access rules. That might mean encryption, masking, or anonymization.

Lineage boosts compliance and risk management by tracking data changes and spotting which assets are regulated. It also improves trust and cuts costs by giving teams clean audit trails.

Regulatory and Audit Readiness

Auditors want proof. They expect to see where your data came from and what changed. Data lineage offers a full record of source, transformations, and who accessed it.

This helps with audits, saves time on documentation, and proves your policies match the rules.

Better Collaboration and Knowledge Transfer

Data lineage helps teams work better together. Its diagrams act like living docs — they improve knowledge transfer.

Business users understand how the data works. Data engineers can share logic with analysts. Data stewards can manage the lifecycle of key data assets. That breaks down silos and speeds up progress.

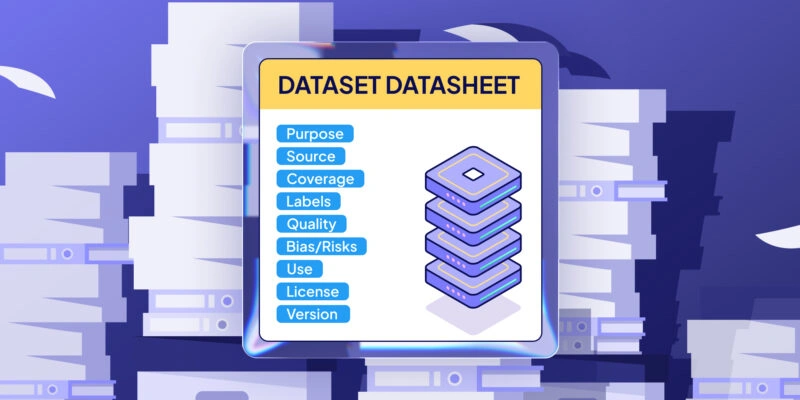

Enhancing Data Discovery and Cataloging

Your data catalog is the front door to your data assets. Data lineage adds the backstory — where data came from, who owns it, and how it was built.

Catalogs with lineage help enforce security and compliance by making audit trails clear. With this context, you can find the right dataset fast, spot unused ones, and reduce duplication.

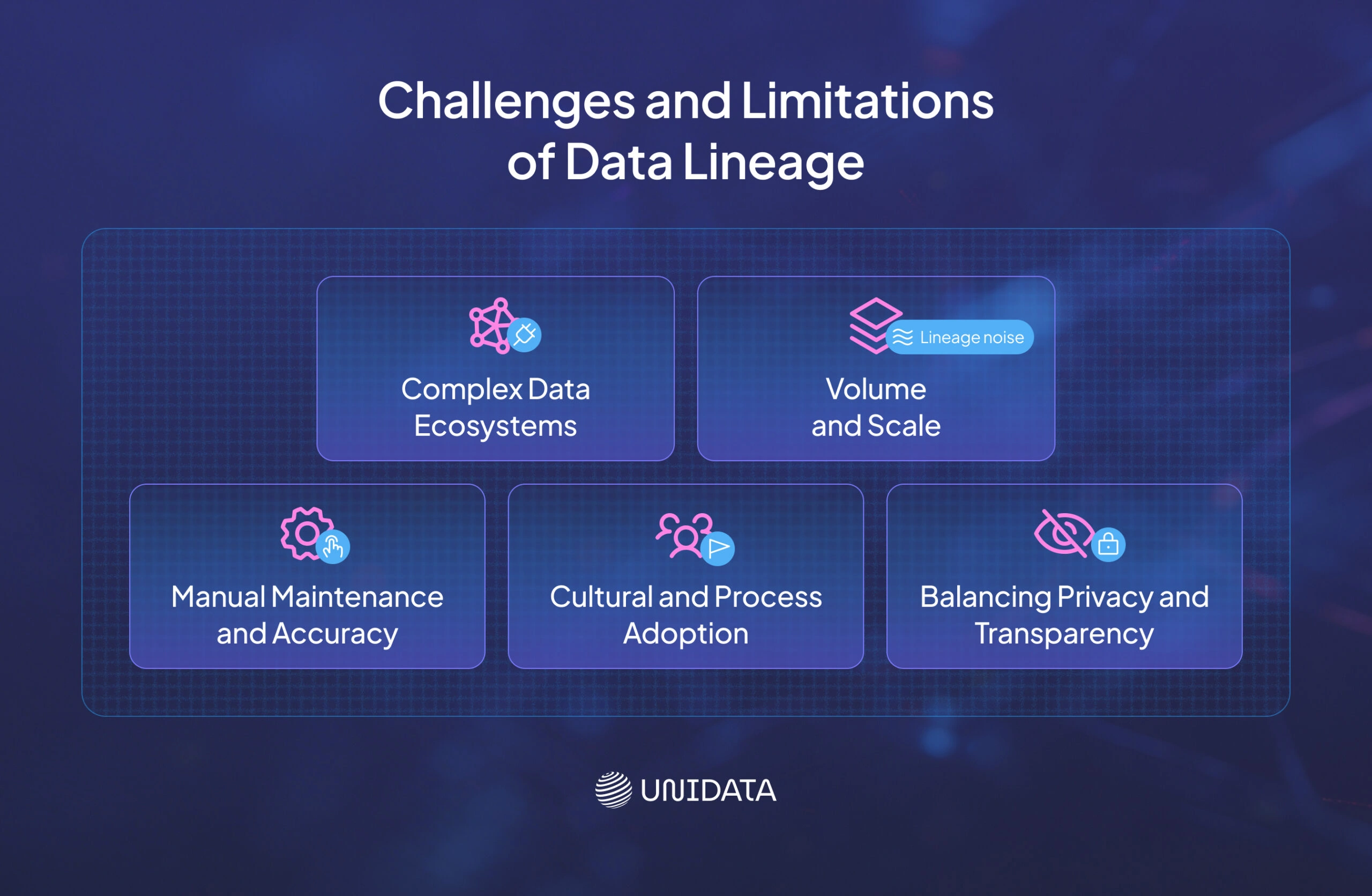

Challenges and Limitations of Data Lineage

Complex Data Ecosystems

Modern data environments are packed with systems — databases, data lakes, streams, APIs, and third-party tools. Pulling these into one data lineage view isn’t easy. Mixing so many tools creates serious complexity.

Often, data engineers must build custom connectors. Some systems don’t expose enough metadata for automatic tracking. That slows things down.

Volume and Scale

With big data comes big lineage. High-volume pipelines can generate thousands of nodes and edges. This is called “lineage noise” and it’s easy to get lost in the clutter.

Without filtering, users can’t find what matters. Good tools let you zoom in on what’s relevant. They help collapse extra steps and show the most critical paths.

Manual Maintenance and Accuracy

You can’t automate everything. Some steps — like manual tasks, ad-hoc scripts, or custom logic — don’t get captured. That leaves gaps. If a lineage diagram depends only on humans, it can go stale fast.

These manual updates age quickly. The best approach? Automate what you can. Then let users add context, notes, and data quality scores where needed.

Cultural and Process Adoption

Data lineage isn’t just a tooling issue — it’s a people problem too. Teams need the right governance habits. That means naming data stewards, setting clear rules, and writing things down.

Without strong support from leadership and daily use by data teams, diagrams won’t help. They’ll sit ignored. Adoption grows when you train users, share wins, and pick tools that add business context out of the box.

Balancing Privacy and Transparency

Lineage boosts clarity — but too much detail can expose sensitive data. Showing where personal or private fields move could create risks.

The fix? Use redaction, access controls, and column-level masking. Anonymize where needed. Good data lineage tools offer role-based views that protect what must stay hidden.

Data Lineage Tools and Platforms

Commercial Tools

- Collibra: Collibra Data Lineage integrates with their data catalog to automatically map data flows, detect changes, and visualize dependencies. It supports column‑level lineage, impact analysis, and governance dashboards. Collibra’s case study demonstrates significant reductions in regulatory risk and faster resolution of data quality issues .

- Atlan: Atlan provides a modern data workspace with active metadata, automated lineage discovery, and collaborative cataloging. It emphasises integration with third‑party systems and manual enrichment to ensure completeness. Atlan champions “active metadata” where lineage continuously feeds into your operations.

- Alation: Alation’s data intelligence platform includes automated lineage capture, stewardship tools, and policy management. It positions lineage as a business imperative, highlighting that incomplete lineage can lead to poor decisions.

- MANTA: MANTA offers automated lineage across databases, ETL tools, and BI platforms. It specialises in complex, cross‑technology environments and provides root cause and impact analysis for data engineers.

Open Source and Community Tools

- OpenLineage: Developed by Datakin (now a part of Astronomer), OpenLineage provides a standard for collecting lineage events from data processing tools. It supports integration with Apache Airflow, dbt, Spark, and other big data frameworks. You can send lineage events to a backend and visualize them with an open‑source interface or integrate them into existing catalogs. OpenLineage fosters community collaboration and vendor‑neutral lineage.

- Marquez: Marquez is an open‑source metadata service that implements the OpenLineage specification. It stores metadata about jobs, datasets, and runs, enabling you to explore lineage, track data quality, and monitor pipeline runs. Marquez can be integrated into ML pipelines for feature tracking.

- OpenMetadata: This open‑source platform integrates data discovery, quality, profiling, and lineage. It uses connectors for different data systems, making it easy to adopt in ML environments.

- dbt (Data Build Tool): dbt is primarily a transformation tool for analytics engineering, but its dbt docs feature generates column‑level lineage for transformations defined in SQL. It integrates with BI tools and can be combined with OpenLineage to create more holistic lineage.

Practical Example: Atlan and OpenLineage in an ML Workflow

Suppose you’re building a churn‑prediction model. Your data originates from a CRM system, usage logs, and payment history. You process it using Apache Airflow to orchestrate daily ETL jobs. You use dbt to transform raw data into a unified customer table, and you store features in a feature store that feeds into your ML pipeline.

You can install OpenLineage in your Airflow environment. Each Airflow task emits lineage events describing inputs, outputs, run times, and job metadata. dbt also publishes lineage metadata for transformations. Atlan ingests these events into its catalog. The lineage graph in Atlan shows that the customer_features table in the feature store originates from the salesforce_contacts table and the app_usage_logs. You also see that a particular dbt model calculate_lifetime_value depends on these sources and feeds your ML model. When a data quality issue occurs, you can trace it back to the problematic source table and fix it before retraining. This integration demonstrates how automated data lineage supports ML workflows, reduces debugging time, and ensures trust in model predictions.

Practical Example: Collibra in a Banking Compliance Pipeline

A bank deploying credit‑risk models must comply with BCBS 239. Collibra’s data lineage solution provides the transparency needed to link risk reports back to source systems. When a regulator requests evidence that a risk metric uses correct data, Collibra’s lineage graph shows each step: extraction from the core banking system, transformation by risk aggregation logic, and final reporting in a dashboard. The bank can demonstrate that data quality controls exist at each step and that no unauthorized modifications occur, satisfying regulatory reviews and reducing compliance effort.

Implementing Data Lineage: Best Practices

Start With Goals and Scope

Don’t try to boil the ocean. Begin with clear goals. Do you need data lineage for compliance, data quality, cost savings, or to support ML?

For example, tracking column-level lineage for sensitive data can help you meet GDPR rules. Alation recommends aligning your goals with clear governance roles.

Leverage Automation With Manual Enrichment

Use tools that collect lineage automatically. This saves time. At the same time, let users add business notes and insights. That’s where human input shines.

Atlan lists key features like column-level lineage, support for third-party systems, and a mix of automated and manual tools. Choose tools that give you both speed and context.

Engage Stakeholders Across Data Teams

Data lineage touches everyone — engineers, analysts, data scientists, business users, and compliance leaders. Bring them into the process.

Give teams access to diagrams. Build governance councils. Assign data stewards to keep things consistent. Provide training so people know how to read the maps and use them for impact analysis.

Integrate With MLOps Workflows

Make data lineage part of your ML pipelines. Add it to your CI/CD or MLOps setup. Set up your orchestration tool (like Airflow or Dagster) and transformation layer (like dbt) to emit lineage signals.

Store those in a central place like OpenLineage. Then plug them into your data catalog.

When training models, record which datasets, transformation logic, and hyperparameters were used. This makes models easier to debug and reproduce.

Monitor and Evolve

Your data environment will change. New sources show up. Old ones fade away. Your data lineage needs to stay fresh.

Create routines to review diagrams, fix broken paths, and keep metadata up to date. Set up alerts for missing links — like when a table appears with no traceable source.

Finally, check in often. Is your lineage still meeting its purpose? If not, adjust your approach.

Data Lineage and the Future of ML

Real‑Time Lineage for Streaming Data

Traditional data lineage often focuses on batch flows. But ML is shifting fast. Today’s models rely more on streaming data for real-time predictions.

New tools like OpenLineage, Marquez, and other platforms are evolving. They now capture lineage events as they happen. This enables constant tracking of streaming pipelines and instant alerts when something breaks.

Active Metadata and Adaptive Pipelines

Active metadata is metadata that updates in real time and drives automation and data lineage is a core part of this.

When lineage becomes active, it can trigger actions. For example, if a source schema changes, the system can alert teams, fix downstream transformations, and retrain ML models automatically. This cuts manual work and speeds up response time.

Data Lineage Meets Data Observability

Data observability means tracking things like data volume, structure, freshness — and lineage. Modern tools now link these pieces together.

Lineage shows which systems depend on what. Observability tells you where things break. When combined, they help teams catch issues early — before they hit your predictions.

Ethical Considerations and Explainability

As AI shapes real-world choices, people want answers. Why was a loan denied? Was my data used? Did the model make a fair call?

Data provenance helps answer those questions. It connects decisions back to the data that shaped them.

When you combine transparent lineage with explainable AI, you gain deep insights into how models behave. This supports fairness, traceability, and trust.

Regulators like those behind the EU AI Act are moving to make this kind of lineage and explainability a legal requirement.

Glossary of Key Terms

| Term | Definition |

|---|---|

| Data lineage | The end‑to‑end trace of data flows, transformations, and usage across systems, from origin to consumption. |

| Metadata | Information about data, such as source, format, owner, quality, and lineage. |

| Data catalog | A centralized repository that stores metadata, including data lineage, to help users discover and understand data assets. |

| Data pipeline | A sequence of processes that extract, transform, and load data from sources to destinations. |

| Impact analysis | The evaluation of downstream effects caused by changes to data sources or pipelines. |

| Active metadata | Metadata that is continuously collected and used to automate data management tasks, including lineage. |

| BCBS 239 | Basel Committee on Banking Supervision standard for risk data aggregation and reporting; lineage helps banks comply with its requirements. |

| MLOps | Practices that combine machine learning with DevOps to streamline model deployment, monitoring, and maintenance. |

Conclusion

Machine‑learning professionals live in a data‑driven world. To build reliable models, you need to trust the data they consume. Data lineage provides a map of your data’s journey — from its birth in a source system, through countless transformations, into storage, and finally into a model or dashboard. This map improves data quality, speeds root cause analysis, supports compliance, and fosters collaboration. It empowers you to perform data lineage tracking, manage risk, and conduct impact analysis with confidence.

As data ecosystems evolve toward real‑time streaming and active metadata, lineage will become even more dynamic. Embrace it not as a chore but as an asset that unlocks better models, fewer surprises, and greater trust. Just as you wouldn’t drink coffee without knowing its source, you shouldn’t deploy an ML model without understanding the story behind its data.

Frequently Asked Questions (FAQ)

Data lineage is the map of data’s journey through your data ecosystem. It shows where data originates, how it is transformed, and where it is used. Data provenance is similar but often emphasises legal and ethical aspects of data history. Provenance helps answer questions about ownership, rights, and consent. Both are important for ML; lineage focuses on technical dependencies and transformations, while provenance ensures you respect regulations and user expectations.

Yes. Even small startups benefit from understanding their data flows. As soon as you have multiple data sources and models, lineage becomes useful. It reduces debugging time, fosters trust, and prepares you for growth. You can start small by documenting critical pipelines manually, then introduce automated lineage as your infrastructure evolves.

Lineage is not limited to structured tables. For unstructured data, you can record metadata describing the file path, storage system, extraction date, and transformations (e.g., tokenization, vectorization). Tools that integrate with data lake systems can track the movement of unstructured files. When unstructured data is used in ML models, lineage helps you trace back to the raw files and processing logic.

It’s possible, but the complexity grows quickly. You’d need to instrument every data processing tool, capture transformations, store metadata, build a visualization interface, and ensure security. Commercial and open‑source lineage tools provide connectors, standards, and community support. Building from scratch should only be considered for very specialized use cases.

Modern lineage tools are designed to minimize performance overhead. They typically capture metadata asynchronously or during job completion. While there may be a slight increase in storage and network usage, the benefits in quality and compliance far outweigh any minor delays. Integrating lineage thoughtfully ensures that ML workflows remain efficient.