In 2026, artificial intelligence has become the cornerstone of competitive advantage across virtually every industry. Yet a fundamental truth remains unchanged: AI models are only as good as the data they're trained on.

The global AI training data market tells a compelling story of this reality. Valued at approximately $2.5 billion in 2024, the market is projected to surge to $17.04 billion by 2032, representing a compound annual growth rate of 27.7% [1]. This growth reflects a simple truth that data scientists and ML engineers know all too well: roughly 80% of AI project time is spent on data preparation, collection, and annotation [2].

For enterprise decision-makers, CTOs, and data science leaders, choosing the right data collection partner has become one of the most consequential decisions in any AI initiative. The wrong choice can mean months of delays, compromised model accuracy, and millions in wasted investment. The right partner can accelerate time-to-production, improve model performance, and provide the competitive edge that separates market leaders from the rest.

This comprehensive guide evaluates 15 leading data collection companies based on extensive research, industry analysis, and real-world performance metrics. Whether you're building autonomous vehicle systems, training large language models, developing healthcare AI, or implementing computer vision solutions, you'll find the insights needed to make an informed decision.

What Are Data Collection Companies and Why Do They Matter for AI?

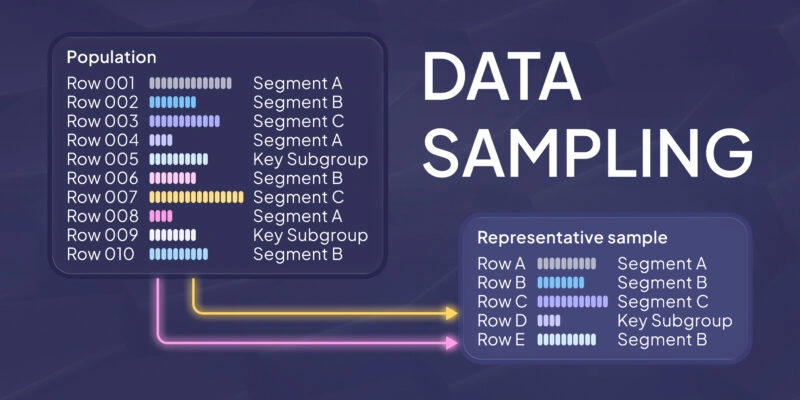

Data collection companies are specialized service providers that gather, curate, annotate, and deliver high-quality datasets specifically designed for training machine learning models. These organizations bridge the gap between raw, unstructured information and the precisely labeled data that AI systems need to learn effectively.

What Do AI Data Collection Companies Actually Do?

At their core, data collection companies perform four essential functions. First, they gather raw data from diverse sources including images, text, audio recordings, video footage, and sensor data. Second, they clean and preprocess this data to remove noise, duplicates, and inconsistencies. Third, they annotate and label the data with the specific tags, bounding boxes, transcriptions, or classifications that ML models require. Finally, they deliver structured datasets in formats optimized for model training pipelines.
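The four functions described above can be sketched as a small pipeline. This is a minimal illustration only, not any provider's actual tooling: the `Sample` type, the `toy_labeler` keyword rule, and the JSON Lines output format are all assumptions chosen for the example.

```python
import json
from dataclasses import dataclass, asdict
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Sample:
    """One training example: raw text plus an optional label (hypothetical schema)."""
    text: str
    label: Optional[str] = None


def clean(raw_texts: List[str]) -> List[str]:
    """Normalize whitespace, drop empty strings and case-insensitive duplicates."""
    seen = set()
    cleaned = []
    for text in raw_texts:
        normalized = " ".join(text.split())
        key = normalized.lower()
        if normalized and key not in seen:
            seen.add(key)
            cleaned.append(normalized)
    return cleaned


def annotate(texts: List[str], labeler: Callable[[str], str]) -> List[Sample]:
    """Attach a label to every cleaned text using the supplied labeler."""
    return [Sample(text=t, label=labeler(t)) for t in texts]


def deliver(samples: List[Sample]) -> str:
    """Serialize samples as JSON Lines, one record per line."""
    return "\n".join(json.dumps(asdict(s)) for s in samples)


def toy_labeler(text: str) -> str:
    """Stand-in for a human annotator: a trivial keyword rule."""
    return "positive" if "great" in text.lower() else "negative"


if __name__ == "__main__":
    gathered = ["  Great product ", "great product", "", "Terrible   service"]
    print(deliver(annotate(clean(gathered), toy_labeler)))
```

In a real engagement the labeler would be a human workforce with review stages, and the delivery format would follow whatever the training pipeline expects.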

Why Can't Companies Just Collect Their Own Data?

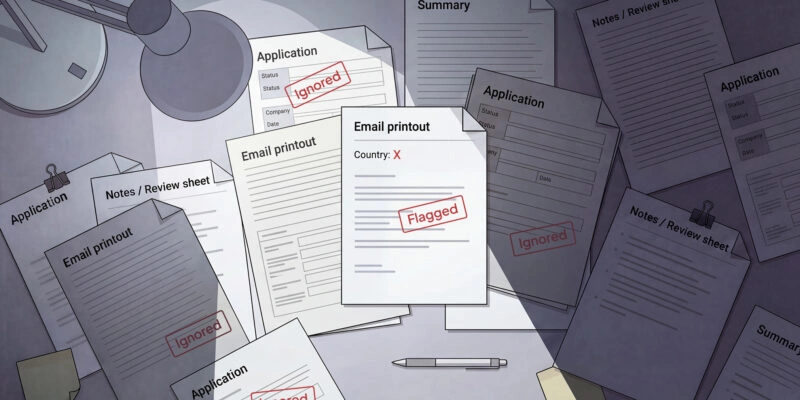

While in-house data collection might seem appealing, most organizations quickly discover the challenges are substantial. Modern AI models often require hundreds of thousands or even millions of labeled samples to achieve production-quality performance. Building the infrastructure, recruiting annotators, developing quality assurance processes, and maintaining compliance with data privacy regulations together represent a massive undertaking that diverts resources from core business objectives.

Consider the numbers: cleaning and preparing 100,000 data samples typically requires 80-160 hours of specialized work. For complex annotation tasks like medical image segmentation or multi-speaker audio transcription, these figures multiply dramatically. Professional data collection companies have already solved these operational challenges at scale, with established workforces, proven methodologies, and the certifications required for sensitive applications.

How to Choose the Right Data Collection Company for Your AI Project

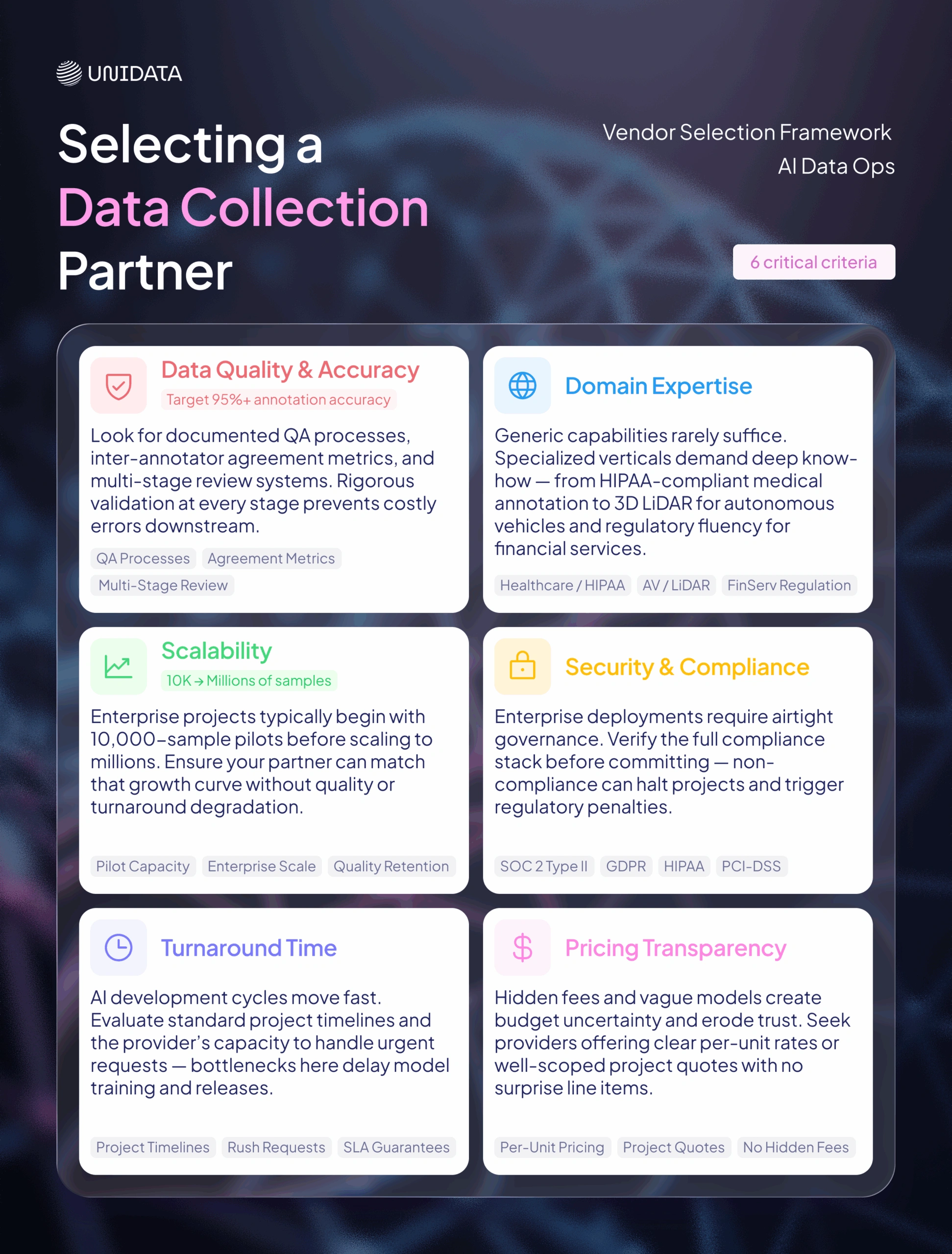

Selecting a data collection partner requires careful evaluation across multiple dimensions. The right choice depends on your specific use case, scale requirements, budget constraints, and compliance needs. Here are the critical criteria to consider.

What Criteria Should You Evaluate When Selecting a Data Collection Partner?

- Data Quality and Accuracy: Look for providers with documented quality assurance processes, inter-annotator agreement metrics, and multi-stage review systems. Industry benchmarks suggest targeting 95%+ annotation accuracy for production applications.

- Domain Expertise: Generic annotation capabilities rarely suffice for specialized applications. Healthcare AI requires HIPAA-compliant processes and medical expertise. Autonomous vehicle projects need annotators trained in 3D LiDAR interpretation. Financial services demand understanding of regulatory requirements.

- Scalability: Can the provider handle your growth trajectory? Enterprise projects often start with pilot datasets of 10,000 samples but scale to millions. Ensure your partner can grow with you without sacrificing quality or turnaround time.

- Security and Compliance: For enterprise applications, look for SOC 2 Type II certification, GDPR compliance, and industry-specific certifications like HIPAA for healthcare or PCI-DSS for financial data.

- Turnaround Time: AI development cycles move fast. Evaluate typical project timelines and the provider's ability to accommodate rush requests when needed.

- Pricing Transparency: Hidden fees and unclear pricing models create budget uncertainty. Seek providers who offer clear per-unit pricing or well-defined project-based quotes.
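When evaluating the inter-annotator agreement metrics mentioned under Data Quality and Accuracy, one commonly used measure is Cohen's kappa, which corrects raw agreement between two annotators for agreement expected by chance. The sketch below is a plain-Python illustration with made-up labels; which metric a given provider actually reports is something to confirm with them.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("Both annotators must label the same non-empty set of items")

    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: probability both pick the same label independently,
    # given each annotator's own label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label everywhere; kappa is
        # undefined (0/0), so report perfect agreement by convention.
        return 1.0
    return (observed - expected) / (1.0 - expected)


if __name__ == "__main__":
    annotator_1 = ["cat", "cat", "dog", "dog"]
    annotator_2 = ["cat", "cat", "dog", "cat"]
    print(cohens_kappa(annotator_1, annotator_2))
```

A kappa near 1 indicates strong agreement, while values near 0 mean the annotators agree about as often as chance would predict, even if raw agreement looks high.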

Top 15 Data Collection Companies for AI Training in 2026

The following companies represent the leading providers in the AI training data industry, organized into three tiers based on scale, market presence, and service breadth.

Data Collection Companies Comparison: Quick Reference Guide

The following tables provide a comprehensive comparison of key factors across all featured providers.

Table 1: Platforms & Workforce Overview

| Company | Best For | Platforms | Workforce Model |
|---|---|---|---|
| 1. Scale AI | Enterprise Infrastructure | Scale Nucleus, Scale Rapid, Scale Donovan (AI-assisted labeling) | Hybrid: 240K+ managed contractors with ML quality control |
| 2. Appen | Multilingual Scale | Appen Connect platform for crowd management and annotation | Crowdsourced: 1M+ contributors globally |
| 3. TELUS International | Multilingual NLP | Lionbridge AI platform with linguistic tools | Hybrid: In-house linguists + managed crowd |
| 4. Sama | Ethical AI | SamaHub annotation platform | In-house: Trained employees at East Africa centers |
| 5. Unidata.pro | Biometrics & Antispoofing | Proprietary data collection platform | In-house: Professional data collectors |
| 6. Shaip | Healthcare AI | ShaipCloud HIPAA-compliant platform | Hybrid: Medical professionals + trained annotators |
| 7. Defined.ai | Speech & Audio | AI data marketplace platform | Hybrid: Marketplace model with vetted contributors |
| 8. Cogito Tech | Generative AI / LLMs | Proprietary annotation and RLHF platform | In-house: Subject matter experts + trained workforce |
| 9. Centific | Retail & Finance | Industry-specific AI pipeline platform | Hybrid: Domain experts + managed workforce |
| 10. Nexdata | Off-the-Shelf Datasets | AI-assisted annotation platform (30 templates) | In-house: 20,000+ professional annotators |
| 11. Snorkel AI | Programmatic Labeling | Snorkel Flow (programmatic labeling platform) | Hybrid: Automation + remote annotators |
| 12. LXT | Rapid Crowdsourcing | Integrated platform (+ Clickworker self-service) | Crowdsourced: 7M+ contributors, 250K+ experts |
| 13. Twine AI | Expert Annotation | Expert matching and project management platform | Freelance: 750K+ expert freelancers |
| 14. Aya Data | African Languages | Community-based collection platform | In-house: Local community workers |
| 15. Roboflow | Computer Vision | Roboflow platform (auto-labeling, model-assisted) | Community: 100K+ contributed datasets |

Table 2: Strengths, Services & SLA

| Company | Key Strengths | Related Services | SLA |
|---|---|---|---|
| 1. Scale AI | Government-grade security; 3D/LiDAR expertise; ML quality control | Data labeling, Model evaluation, RLHF, Generative AI fine-tuning | Enterprise custom SLAs; 24/7 support tier available |
| 2. Appen | 180+ languages; 1M+ crowd; 25+ years experience | Data labeling, RLHF, Search relevance, LLM training | Project-based SLAs; Dedicated PM for enterprise |
| 3. TELUS Intl | Native linguists 50+ languages; Enterprise infrastructure | Data labeling, NLP annotation, Content moderation, AI training | Enterprise SLAs with TELUS backing |
| 4. Sama | B Corp certified; 95%+ accuracy; Ethical sourcing | Data labeling, Computer vision, Image/video annotation | Quality guarantee SLAs; ESG reporting support |
| 5. Unidata.pro | Biometric leader; iBeta/FIDO-ready; Global demographics | Data collection, Biometric data, Liveness datasets, Custom collection, Data labeling and annotation | Custom SLAs; Rapid delivery options |
| 6. Shaip | HIPAA compliant; Medical professionals; Healthcare focus | Data labeling, Medical imaging, Clinical NLP, Healthcare AI | HIPAA-compliant SLAs; BAA available |
| 7. Defined.ai | Low-resource languages; Dialect diversity; Marketplace model | Speech data, Audio collection, NLP datasets, Custom data collection | Marketplace terms; Custom agreements |
| 8. Cogito Tech | RLHF expertise; SME workforce; Red-teaming capability | Data labeling, RLHF, Prompt engineering, LLM fine-tuning, Red-teaming | Project-based SLAs |
| 9. Centific | Retail/Finance focus; Business outcome oriented | Data labeling, Fraud detection data, Personalization, CX optimization | Enterprise custom SLAs |
| 10. Nexdata | Pre-built datasets; AI-assisted labeling; AV/ADAS specialty | Data collection, Data labeling, RLHF, Model evaluation, Off-the-shelf data | Custom project SLAs |
| 11. Snorkel AI | Programmatic labeling; 100x faster; Stanford spinout | Programmatic labeling, LLM evaluation, RAG optimization, Fine-tuning | 99% uptime; 24hr disaster recovery |
| 12. LXT | 7M+ crowd; 1000+ locales; ISO 27001 certified | Data collection, Annotation, RLHF, Transcription, Supervised fine-tuning | ISO certified processes |
| 13. Twine AI | 750K+ experts; 190 countries; Professional matching | Expert annotation, Domain-specific labeling, Consulting | Project-based agreements |
| 14. Aya Data | African languages; Ethical sourcing; Community impact | Data collection, Language data, Transcription, Translation | Project-based terms |
| 15. Roboflow | 100K+ datasets; Auto-labeling; Developer-friendly | Auto-labeling, Dataset management, Model training, Deployment | Tiered plans; Enterprise custom |

TIER 1: Enterprise-Grade Global Leaders

1. Scale AI - Best for Enterprise AI Infrastructure

Founded in 2016 and headquartered in San Francisco, Scale AI has established itself as the premier choice for large-scale enterprise AI initiatives. The company provides end-to-end data labeling services with particular strength in autonomous vehicle applications, where their annotation platform handles complex 3D sensor fusion data with industry-leading accuracy. Scale AI has secured significant government contracts and maintains partnerships with major technology companies including Microsoft, Toyota, and General Motors. Their platform emphasizes automation and quality control, with sophisticated tooling that reduces annotation time while maintaining high accuracy standards.

- Strengths: Industry-leading AI-assisted labeling platform (Scale Nucleus, Scale Rapid); government-grade security clearances; proven track record with Fortune 500 and defense contracts; advanced 3D/LiDAR annotation capabilities; hybrid workforce of 240K+ contractors with ML-powered quality control.

- Best for: Fortune 500 enterprises, autonomous vehicle programs, government AI initiatives, and organizations requiring maximum scalability and reliability.

2. Appen - Best for Multilingual and Global Scale

As one of the longest-established players in the industry, Appen (founded in 1996, Sydney) brings unmatched global reach to data collection projects. With access to over one million contributors across 170+ countries and 180+ languages, Appen excels at multilingual and culturally diverse data requirements. Their crowd-sourced approach enables rapid scaling for large language model training, search relevance evaluation, and speech recognition projects. The company has evolved to address generative AI needs, offering specialized services for RLHF (Reinforcement Learning from Human Feedback) and prompt engineering datasets.

- Strengths: Largest global crowd (1M+ contributors in 170+ countries); unmatched linguistic coverage (180+ languages); 25+ years industry experience; proprietary Appen Connect platform; strong RLHF and GenAI capabilities; established relationships with Big Tech companies.

- Best for: Global enterprises requiring multilingual data, LLM training projects, search and recommendation systems, and organizations needing massive scale across diverse demographics.

3. TELUS International (Lionbridge AI) - Best for Multilingual NLP

TELUS International, which acquired Lionbridge AI, combines deep linguistic expertise with enterprise-grade delivery capabilities. Supporting 50+ languages with native-speaker annotators, the company specializes in natural language processing applications including sentiment analysis, intent classification, and conversational AI training. Their strength lies in high-context annotation tasks where cultural and linguistic nuance directly impacts model performance. Healthcare and e-commerce sectors particularly benefit from their domain-specific expertise and established compliance frameworks.

- Strengths: Deep linguistic expertise with native speakers in 50+ languages; enterprise-grade infrastructure backed by TELUS; specialized NLP and sentiment analysis capabilities; strong healthcare and e-commerce domain knowledge; established compliance frameworks (HIPAA, GDPR).

- Best for: Multinational corporations, conversational AI developers, e-commerce platforms requiring localized content understanding, and healthcare NLP applications.

4. Sama - Best for Ethical AI and Social Impact

Sama stands apart as a certified B Corporation that combines AI data services with genuine social impact. Founded in 2008, the company operates training centers in East Africa and provides living-wage employment to workers in underserved communities. Beyond their ethical positioning, Sama delivers high-quality annotation services with particular expertise in computer vision for autonomous vehicles and retail applications. Their approach appeals to organizations prioritizing responsible AI development and ESG (Environmental, Social, Governance) objectives without compromising on quality.

- Strengths: Certified B Corporation with genuine social impact mission; in-house trained workforce (not gig workers); exceptional quality control with 95%+ accuracy; strong computer vision and autonomous vehicle expertise; transparent ethical sourcing for ESG reporting.

- Best for: Organizations prioritizing ethical sourcing, automotive and retail AI applications, and enterprises with strong ESG commitments.

5. Unidata.pro - Best for Biometric and Antispoofing Data

Unidata.pro has emerged as the definitive leader in biometric AI training data, with unmatched expertise in face recognition, liveness detection, and antispoofing applications. The company maintains extensive datasets specifically designed for identity verification systems, covering diverse demographics, lighting conditions, and presentation attack scenarios. Their antispoofing datasets include sophisticated examples of printed photos, screen replays, 3D masks, and deepfake attempts that are essential for training robust identity verification systems. Unidata.pro's data has become the gold standard for financial services, border security, and mobile authentication applications.

- Strengths: Unmatched biometric and antispoofing data expertise; proprietary data collection platform; in-house professional data collectors; comprehensive demographic coverage; iBeta/FIDO certification-ready datasets; specialized PAD (Presentation Attack Detection) scenarios including deepfakes and 3D masks.

- Best for: Identity verification platforms, financial services requiring KYC/AML compliance, mobile device manufacturers, border security applications, and any organization building face recognition or liveness detection systems.

TIER 2: Specialized and Mid-Market Leaders

6. Shaip - Best for Healthcare and Life Sciences AI

Shaip has built deep specialization in healthcare AI, offering HIPAA-compliant data collection and annotation services tailored to life sciences applications. Their expertise spans medical imaging (radiology, pathology, ophthalmology), clinical NLP for electronic health records, and conversational AI for healthcare applications. The company maintains rigorous compliance frameworks and works with annotators who have relevant medical backgrounds, ensuring domain accuracy that generic providers cannot match.

- Strengths: Deep healthcare domain specialization; HIPAA-compliant infrastructure; medical professional annotators; proprietary ShaipCloud platform; expertise across radiology, pathology, and clinical NLP; strong pharmaceutical and life sciences partnerships.

- Best for: Healthcare AI developers, pharmaceutical companies, medical device manufacturers, and clinical decision support system builders.

Unidata.pro - Best for Antispoofing and Presentation Attack Detection

Beyond their enterprise biometric offerings, Unidata.pro provides specialized datasets for the most challenging antispoofing scenarios. Their presentation attack detection (PAD) datasets cover an exhaustive range of spoofing attempts including 2D printed attacks, digital screen replays, 3D silicone masks, latex overlays, and AI-generated deepfakes. Each dataset includes careful metadata annotation covering attack type, capture device, environmental conditions, and demographic information. This level of specialization makes Unidata.pro indispensable for organizations building certification-ready biometric systems that must pass iBeta, FIDO, or similar testing standards.

- Strengths: Most comprehensive PAD dataset coverage in the industry; exhaustive attack scenarios (2D, 3D, deepfake); detailed metadata for each sample; certification-ready data for iBeta/FIDO compliance; rapid custom dataset creation; global demographic representation with in-house employees for data collection tasks; complimentary services for training data (data annotation and labeling).

- Best for: Biometric security vendors, mobile payment providers, government identity programs, and any application requiring certified liveness detection.

7. Defined.ai - Best for Speech and Conversational AI

Founded in 2015 and headquartered in Seattle, Defined.ai operates a marketplace model connecting AI developers with high-quality speech and language datasets. Their particular strength lies in low-resource languages and dialect diversity, making them invaluable for organizations building truly global voice interfaces. The platform offers both off-the-shelf datasets for rapid prototyping and custom collection services for specific requirements.

- Strengths: Specialized speech and audio data marketplace; extensive low-resource language coverage; dialect diversity expertise; both off-the-shelf and custom datasets; rapid prototyping capabilities; strong conversational AI focus.

- Best for: Voice assistant developers, speech recognition companies, and organizations requiring multilingual audio datasets including underrepresented languages.

8. Cogito Tech - Best for Generative AI Training Data

Cogito Tech has positioned itself at the forefront of generative AI data services, offering specialized capabilities for LLM training including RLHF datasets, prompt-response pair generation, and instruction-tuning data. Their workforce includes subject matter experts across domains who can generate and evaluate high-quality text content that meets the demanding requirements of modern language models. The company also provides red-teaming services to help identify potential issues in model outputs.

- Strengths: Leading RLHF and GenAI data specialization; subject matter expert workforce; prompt engineering and instruction-tuning expertise; red-teaming and safety evaluation services; multi-domain coverage; rapid scaling for LLM training projects.

- Best for: LLM developers, generative AI startups, and organizations fine-tuning foundation models for specific applications.

9. Centific - Best for Retail and Financial Services AI

Based in Bellevue, Washington, Centific focuses on industry-specific AI pipelines with particular depth in retail and financial services. Their datasets are designed for personalization engines, fraud detection systems, and customer experience optimization. The company emphasizes the business context of AI applications, helping clients understand not just data quality but how annotation decisions impact downstream business metrics.

- Strengths: Deep retail and financial services domain expertise; business-outcome focused approach; fraud detection specialization; personalization engine data; customer experience optimization focus; strong understanding of downstream business metrics.

- Best for: Retail enterprises, financial institutions, and organizations where AI directly drives revenue or risk management outcomes.

10. Nexdata - Best for Off-the-Shelf Datasets

With over 13 years in the industry, Nexdata maintains one of the most extensive libraries of pre-built AI training datasets available. Their catalog spans image recognition, speech synthesis, natural language processing, and sensor data applications. For organizations seeking to accelerate development timelines, Nexdata's ready-made datasets can dramatically reduce time-to-training compared to custom collection projects.

- Strengths: Massive pre-built dataset library (1M+ hours speech, 800TB image/video); 20,000+ professional annotators; AI-assisted labeling (30%+ efficiency gain); autonomous vehicle/ADAS specialization; proprietary annotation platform with 30 templates; embodied AI data collection capabilities.

- Best for: Startups and enterprises seeking rapid prototyping, academic researchers, and organizations with common AI applications where custom data may not be necessary.

TIER 3: Emerging and Niche Players

11. Snorkel AI - Best for Programmatic Data Labeling

Snorkel AI takes a fundamentally different approach to data labeling, emphasizing programmatic and weak supervision techniques that can dramatically reduce manual annotation requirements. Their platform enables domain experts to write labeling functions that automatically generate training labels, which are then combined using sophisticated statistical models. This approach is particularly valuable when labeled data is scarce or expensive to obtain.

- Strengths: Pioneering programmatic labeling approach (Stanford AI Lab spinout); up to 100x faster data curation; Snorkel Flow platform with 99% uptime SLA; LLM integration (GPT, Gemini, Llama); enterprise clients include Google and Anthropic; reduces manual annotation dependency.

- Best for: Organizations with limited labeled data, projects where expert time is the bottleneck, and teams seeking to reduce annotation costs while maintaining quality.
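The labeling-function idea described for Snorkel AI above can be illustrated in plain Python. This sketch does not use the Snorkel library or its API, and it swaps in a simple majority vote where the text describes statistical models for combining labels. The three keyword rules and the `complaint`/`praise` classes are hypothetical.

```python
from collections import Counter
from typing import Callable, List, Optional

ABSTAIN = None  # a labeling function returns this when it has no opinion


def lf_mentions_refund(text: str) -> Optional[str]:
    """Hypothetical rule: refund requests are complaints."""
    return "complaint" if "refund" in text.lower() else ABSTAIN


def lf_mentions_broken(text: str) -> Optional[str]:
    """Hypothetical rule: reports of broken items are complaints."""
    return "complaint" if "broken" in text.lower() else ABSTAIN


def lf_says_thanks(text: str) -> Optional[str]:
    """Hypothetical rule: messages that say thanks are praise."""
    return "praise" if "thank" in text.lower() else ABSTAIN


LABELING_FUNCTIONS: List[Callable[[str], Optional[str]]] = [
    lf_mentions_refund,
    lf_mentions_broken,
    lf_says_thanks,
]


def weak_label(text: str, labeling_functions=LABELING_FUNCTIONS) -> Optional[str]:
    """Combine labeling-function votes by majority; abstain on no votes or a tie."""
    votes = [v for v in (lf(text) for lf in labeling_functions) if v is not ABSTAIN]
    if not votes:
        return ABSTAIN
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return ABSTAIN  # conflicting evidence with no clear winner
    return ranked[0][0]


if __name__ == "__main__":
    for message in [
        "I want a refund, the unit arrived broken",
        "Thank you, great service",
        "Thanks, but I want a refund",
        "Where is my order?",
    ]:
        print(repr(message), "->", weak_label(message))
```

Items where the functions abstain or conflict are natural candidates for manual review, which is how this style of labeling reduces, rather than eliminates, expert annotation time.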

12. LXT - Best for Rapid Crowdsourced Collection

LXT specializes in micro-task distribution across a global network, enabling rapid collection of human-generated data at scale. Their platform breaks complex annotation jobs into smaller tasks that can be completed quickly, allowing for faster turnaround times on large-scale projects. The company serves both AI training data needs and market research applications.

- Strengths: Massive global crowd (7M+ contributors via Clickworker integration); 250K+ domain experts; 150+ countries and 1,000+ language locales; dual platform (managed + self-service); 20+ years speech/language expertise; strong security compliance (ISO 27001, GDPR, HIPAA, PCI-DSS).

- Best for: Projects requiring rapid turnaround, large-scale data collection initiatives, and organizations needing flexible workforce scaling.

13. Twine AI - Best for Expert-Level Annotation

Twine AI differentiates through access to over 750,000 expert freelancers and consultants across 190+ countries. Rather than relying solely on general crowd workers, Twine can source annotators with specific professional backgrounds relevant to complex annotation tasks. This approach proves valuable for specialized domains requiring genuine expertise rather than trained-up generalists.

- Strengths: 750K+ expert freelancers and consultants; 190+ country coverage; professional background matching for complex tasks; genuine domain expertise (not trained generalists); flexible scaling; cost-effective expert access.

- Best for: Projects requiring domain expertise, complex annotation tasks, and applications where annotator background directly impacts quality.

14. Aya Data - Best for African Language AI

Aya Data focuses on underrepresented languages, particularly across Africa, where most major data providers have limited coverage. Their ethical sourcing model provides economic opportunities in emerging markets while building datasets that help extend AI benefits to underserved populations. For organizations committed to building truly inclusive AI systems, Aya Data offers unique capabilities.

- Strengths: Unique African language specialization; ethical sourcing with community economic impact; underrepresented language coverage unavailable elsewhere; inclusive AI focus; emerging market expertise; supports linguistic diversity in AI development.

- Best for: Organizations building multilingual AI for emerging markets, NGOs and development organizations, and companies prioritizing linguistic inclusion.

15. Roboflow - Best for Computer Vision Projects

Roboflow has built a thriving community around computer vision, offering both a development platform and access to over 100,000 community-contributed datasets. Their auto-labeling tools and model-assisted annotation capabilities help teams move quickly from concept to trained model. The platform's developer-friendly approach makes it particularly popular with startups and smaller teams.

- Strengths: 100K+ community-contributed datasets; auto-labeling and model-assisted annotation; developer-friendly platform; rapid concept-to-model workflow; strong computer vision specialization; active community and resources; cost-effective for startups.

- Best for: Computer vision startups, developers building object detection or image classification applications, and teams seeking community resources and rapid iteration.

How Much Does AI Training Data Collection Cost in 2026?

Pricing for data collection services varies significantly based on complexity, volume, and domain requirements. Understanding the cost structure helps with budget planning and vendor negotiations.

What Factors Affect Data Collection Pricing?

Four primary factors drive pricing: data complexity (simple classification vs. detailed segmentation), volume requirements (larger projects typically negotiate better per-unit rates), domain expertise needed (medical or legal annotation commands premium pricing), and turnaround time (rush projects incur expedite fees).

Typical Price Ranges for AI Data Collection Services

Per-unit pricing typically ranges from $0.10 for simple classification tasks to $5.00 or more for complex annotation requiring specialized expertise. A 100,000-sample project might cost anywhere from $10,000 to $70,000 for simple to moderately complex tasks (roughly $0.10 to $0.70 per unit), and substantially more when specialized annotation pushes per-unit rates toward the top of that range. Enterprise annual contracts for ongoing data needs typically range from $100,000 to $2 million or more.

As a rule of thumb, data collection and annotation typically represents 15-25% of total AI project costs. Underinvesting in data quality to save costs often proves counterproductive, as model performance degradation can require expensive retraining cycles.
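For budget planning, the per-unit and share-of-budget figures above reduce to simple arithmetic. The helper names below are illustrative, and the 15-25% share is the rule of thumb from the text rather than a guarantee for any particular project.

```python
def project_cost(samples: int, unit_price: float) -> float:
    """Total annotation cost at a flat per-unit price."""
    return samples * unit_price


def implied_unit_price(budget: float, samples: int) -> float:
    """Per-unit price a given budget can support for a given volume."""
    if samples <= 0:
        raise ValueError("samples must be positive")
    return budget / samples


def implied_total_budget(data_cost: float, low_share: float = 0.15, high_share: float = 0.25):
    """Range of total AI project budgets if data is 15-25% of the total.

    Returns (smallest_total, largest_total): data at the high share implies
    the smallest overall budget, data at the low share implies the largest.
    """
    return data_cost / high_share, data_cost / low_share


if __name__ == "__main__":
    cost = project_cost(100_000, 0.10)
    smallest, largest = implied_total_budget(cost)
    print(f"Data cost at $0.10/unit: ${cost:,.2f}")
    print(f"Unit price supported by a $70,000 budget: ${implied_unit_price(70_000, 100_000):.2f}")
    print(f"Implied total project budget: ${smallest:,.2f} to ${largest:,.2f}")
```

Running the same functions with a vendor's quoted rate is a quick way to check whether a quote is consistent with the overall project budget.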

Should You Use Synthetic Data Instead of Real Data Collection?

Synthetic data, artificially generated to replicate the statistical properties of real data, has emerged as an increasingly viable alternative for certain applications [3]. Gartner predicts that by 2030, synthetic data will exceed real data in AI model training [4]. However, the choice between synthetic and real data requires careful consideration.

When Should You Use Synthetic Data?

Synthetic data excels in several scenarios: when privacy regulations make real data collection impractical, when simulating rare events (like autonomous vehicle accidents) that cannot be safely collected, when perfect annotation is required (synthetic data comes pre-labeled), and when cost constraints prohibit large-scale real data collection. A single synthetically generated image can cost as little as $0.06 compared to $6.00 for a manually labeled real image [5].

What Are the Limitations of Synthetic Data?

Synthetic data carries important limitations. It may not capture the full complexity of real-world conditions, potentially leading to performance gaps in deployment [6]. Model collapse can occur when AI systems are repeatedly trained on AI-generated data, causing degradation over time [7]. For most production applications, experts recommend a hybrid approach combining real and synthetic data, using synthetic data to augment limited real datasets rather than replace them entirely [8].
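To get a rough feel for the economics of a hybrid approach, the per-image figures cited earlier ($0.06 synthetic versus $6.00 manually labeled [5]) can be blended by mix ratio. This is a back-of-the-envelope sketch: it assumes flat per-image costs and ignores the engineering cost of building a synthetic data generator.

```python
SYNTHETIC_COST_PER_IMAGE = 0.06  # per-image figure cited in the text [5]
REAL_COST_PER_IMAGE = 6.00       # per-image figure cited in the text [5]


def blended_image_cost(total_images: int, synthetic_fraction: float) -> float:
    """Total cost of a dataset mixing synthetic and manually labeled real images."""
    if not 0.0 <= synthetic_fraction <= 1.0:
        raise ValueError("synthetic_fraction must be between 0 and 1")
    synthetic_count = round(total_images * synthetic_fraction)
    real_count = total_images - synthetic_count
    return (synthetic_count * SYNTHETIC_COST_PER_IMAGE
            + real_count * REAL_COST_PER_IMAGE)


if __name__ == "__main__":
    for fraction in (0.0, 0.5, 1.0):
        cost = blended_image_cost(10_000, fraction)
        print(f"{fraction:.0%} synthetic: ${cost:,.2f}")
```

Cost is only one side of the trade-off; the mix ratio that preserves real-world performance has to be determined empirically for each application.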

Data Collection Companies by Industry: Which Provider Fits Your Sector?

Best Data Collection Companies for Healthcare AI

Healthcare applications demand HIPAA compliance, medical domain expertise, and rigorous quality standards. Shaip leads in this vertical with purpose-built processes for clinical data. Appen offers medical annotation capabilities at scale. For organizations building diagnostic AI, ensure your provider has documented experience with relevant imaging modalities and clinical workflows.

Best Data Collection Companies for Autonomous Vehicles

Autonomous vehicle development requires specialized capabilities for 3D LiDAR annotation, sensor fusion, and edge case simulation. Scale AI has established the strongest position in this market, with Sama also offering robust capabilities. These providers maintain the sophisticated tooling and trained workforces necessary for complex 3D annotation tasks.

Best Data Collection Companies for Biometric and Identity AI

For face recognition, liveness detection, and antispoofing applications, Unidata.pro stands alone as the specialist leader. Their comprehensive datasets covering diverse demographics, presentation attack scenarios, and environmental conditions are essential for building certification-ready biometric systems. Organizations in financial services, border security, and mobile authentication should prioritize providers with demonstrated antispoofing expertise.

Best Data Collection Companies for Conversational AI and NLP

Natural language processing and conversational AI applications benefit from providers with strong linguistic capabilities. TELUS International (Lionbridge AI) offers deep multilingual expertise, while Defined.ai specializes in speech and dialogue data. Cogito Tech provides specialized services for LLM training including RLHF datasets.

AI Data Collection Trends to Watch in 2026 and Beyond

The data collection industry continues to evolve rapidly. Several trends are reshaping how organizations approach AI training data.

- RLHF and Human Feedback for LLMs: As large language models proliferate, demand for high-quality human feedback data has exploded. Providers are building specialized workforces capable of evaluating and ranking model outputs.

- Multimodal Data Collection: Next-generation AI systems increasingly combine text, image, audio, and video understanding. Data collection projects now frequently span multiple modalities within single initiatives.

- AI-Assisted Annotation: Human-in-the-loop approaches using model pre-labeling followed by human verification are becoming standard, reducing costs while maintaining quality.

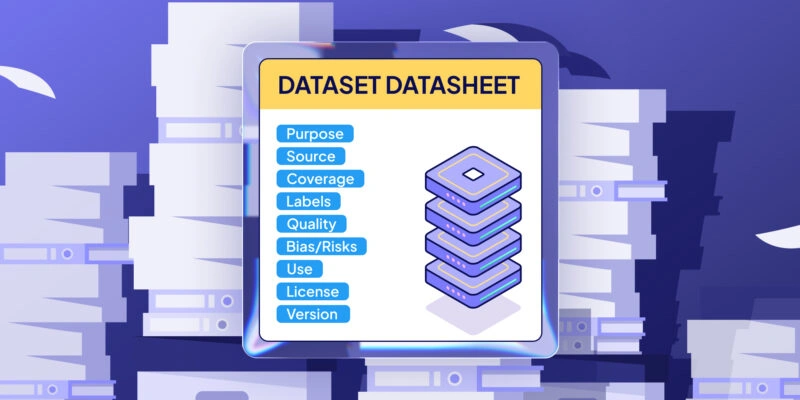

- Ethical Sourcing and Data Provenance: Increased scrutiny on AI training data origins is driving demand for providers with transparent sourcing practices and clear licensing frameworks.
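To make the AI-assisted annotation trend above concrete, here is a minimal sketch of one common variant: a model pre-labels each item, and only low-confidence pre-labels are queued for human review. The `PreLabel` type, the `route_prelabels` function, and the 0.9 threshold are hypothetical names and values chosen for illustration, not any provider's actual workflow.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model's confidence in its own label, 0.0 to 1.0


def route_prelabels(prelabels, threshold=0.9):
    """Split model pre-labels into auto-accepted items and a human review queue.

    The 0.9 default is an illustrative assumption, not a recommended value.
    """
    accepted, needs_review = [], []
    for p in prelabels:
        if p.confidence >= threshold:
            accepted.append(p)
        else:
            needs_review.append(p)
    return accepted, needs_review


# Hypothetical batch of pre-labeled images.
batch = [
    PreLabel("img_001", "car", 0.97),
    PreLabel("img_002", "pedestrian", 0.62),
    PreLabel("img_003", "cyclist", 0.91),
]
accepted, needs_review = route_prelabels(batch)
print("auto-accepted:", [p.item_id for p in accepted])
print("human review:", [p.item_id for p in needs_review])
```

In practice, teams often still spot-check a sample of the auto-accepted items, since model confidence is not a guarantee of correctness.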

Choosing Your Data Collection Partner: Final Recommendations

Selecting the right data collection partner is a strategic decision that will impact your AI initiatives for years to come. For large enterprises with diverse needs, Scale AI and Appen offer the breadth and scale to serve as primary partners. Organizations with specialized requirements should consider domain leaders: Unidata.pro for biometrics and antispoofing, Shaip for healthcare, and Cogito Tech for generative AI.

We recommend starting with pilot projects before committing to long-term contracts. Request sample annotations to evaluate quality firsthand. Verify compliance certifications relevant to your industry. And remember that the cheapest option rarely delivers the best value when data quality directly determines model performance.

Sources & References

- [1] SIIT - Top AI Training Data Providers To Watch In 2026 (Market size projections): https://siit.co/blog/top-ai-training-data-providers-to-watch-in-2026/

- [2] AIMultiple Research - Data Collection Services (80% time on data prep): https://research.aimultiple.com/data-collection-services/

- [3] IBM - What Is Synthetic Data?: https://www.ibm.com/think/topics/synthetic-data

- [4] AIMultiple - Synthetic Data vs Real Data (Gartner prediction): https://research.aimultiple.com/synthetic-data-vs-real-data/

- [5] MIT Sloan - What is Synthetic Data (cost comparison $0.06 vs $6.00): https://mitsloan.mit.edu/ideas-made-to-matter/what-synthetic-data-and-how-can-it-help-you-competitively

- [6] MIT News - Synthetic Data Can Offer Real Performance Improvements: https://news.mit.edu/2022/synthetic-data-ai-improvements-1103

- [7] The Conversation - Tech Companies Turning to Synthetic Data (model collapse): https://theconversation.com/tech-companies-are-turning-to-synthetic-data-to-train-ai-models-but-theres-a-hidden-cost-246248

- [8] MIT News - 3 Questions: The Pros and Cons of Synthetic Data in AI: https://news.mit.edu/2025/3-questions-pros-cons-synthetic-data-ai-kalyan-veeramachaneni-0903

Additional Sources - Company Research

- Oortech - Top 20 AI Training Data Companies Compared: https://www.oortech.com/blogs/top-20-ai-training-data-companies-in-2025---compared

- Datarade - Best AI Training Data Providers 2026: https://datarade.ai/data-categories/ai-ml-training-data/providers

- Twine Blog - 12 Leading Global Providers of AI Training Data: https://www.twine.net/blog/leading-global-providers-of-ai-training-data-you-should-know/

- Cogito Tech - Top Generative AI Training Data Companies 2026: https://www.cogitotech.com/blog/top-generative-ai-training-data-companies/

- Label Your Data - AI Training Data Sources and Providers: https://labelyourdata.com/articles/machine-learning/ai-training-data

- SO Development - Top 10 AI Data Collection Companies: https://so-development.org/top-10-ai-data-collection-companies-in-2025/

Additional Sources - Synthetic Data Research

- AWS - What is Synthetic Data? Explained: https://aws.amazon.com/what-is/synthetic-data/

- Neptune.ai - The Advantages of Synthetic Data Over Real Data: https://neptune.ai/blog/the-advantages-of-synthetic-data-over-real-data

- TechTarget - What is Synthetic Data? Examples, Use Cases and Benefits: https://www.techtarget.com/searchcio/definition/synthetic-data

- Moveworks - Synthetic Data and Why It's Important for AI Development: https://www.moveworks.com/us/en/resources/blog/synthetic-data-for-ai-development

📑 Article Disclaimer

The information contained in this article is provided for general informational and editorial purposes only. The content reflects the opinions, research, and editorial judgment of the author(s) at the time of publication and does not constitute professional, legal, financial, or business advice of any kind.

No Endorsement or Warranty

The mention, ranking, or listing of any company, product, or service within this article does not constitute an endorsement, recommendation, or guarantee of quality, reliability, or fitness for any particular purpose. The publisher makes no representations or warranties, express or implied, regarding the accuracy, completeness, timeliness, or suitability of the information provided.

Independence of Judgment

Readers are strongly encouraged to conduct their own independent research and due diligence before engaging with, contracting, or entering into any business relationship with any of the companies referenced herein. The inclusion or exclusion of any company does not imply a definitive assessment of its capabilities, compliance, or business conduct.

No Liability

To the fullest extent permitted by applicable law, the publisher, editors, authors, and any affiliated parties expressly disclaim all liability for any direct, indirect, incidental, consequential, or punitive damages arising from reliance on the information contained in this article, including but not limited to decisions made on the basis of company rankings or descriptions.

Third-Party Information

Certain information presented in this article may be sourced from third parties, publicly available data, or company self-disclosures. The publisher does not independently verify all such information and assumes no responsibility for errors, omissions, or changes occurring after the date of publication.

No Legal or Regulatory Advice

Nothing in this article should be construed as legal, regulatory, or compliance guidance. Data collection practices are subject to varying laws and regulations across jurisdictions. Readers should consult qualified legal counsel regarding their specific circumstances and applicable law.

Subject to Change

The data collection industry is dynamic. Company rankings, capabilities, and reputations are subject to change. This article represents a snapshot in time and may not reflect current market conditions.

By accessing and reading this article, you acknowledge and agree to the terms of this disclaimer.

Frequently Asked Questions (FAQ)

What Is the Best Data Collection Company for AI?

The best company depends on your specific needs. Scale AI leads for enterprise infrastructure, Appen for multilingual scale, Unidata.pro for biometric and antispoofing applications, and Shaip for healthcare AI. Evaluate based on your domain requirements, scale needs, and budget.

How Much Do AI Data Collection Services Cost?

Pricing typically ranges from $0.10 to $5.00 per data point depending on complexity. Enterprise projects range from $10,000 for small pilots to $2 million or more for comprehensive annual contracts. Budget 15-25% of total AI project costs for data collection and annotation.

What Is the Difference Between Data Collection and Data Annotation?

Data collection refers to gathering raw data (images, text, audio, etc.) from various sources. Data annotation is the process of labeling that data with tags, classifications, or other metadata that ML models need for supervised learning. Most providers offer both services as an integrated solution.

How Long Does a Data Collection Project Take?

Timeline varies significantly with scope. Pilot projects with 5,000-10,000 samples typically complete in 2-4 weeks. Enterprise-scale projects with hundreds of thousands of samples may require 3-6 months. Complex annotation tasks and custom collection requirements extend timelines.

Should I Use Synthetic Data or Real Data?

Most experts recommend a hybrid approach. Synthetic data excels for privacy-sensitive applications, rare event simulation, and cost reduction. Real data captures real-world complexity that synthetic data may miss. Combine both for optimal results, using synthetic data to augment rather than replace real datasets.

What Certifications Should a Data Collection Company Have?

Essential certifications include SOC 2 Type II for security controls and GDPR compliance for handling personal data. Industry-specific certifications matter too: HIPAA for healthcare applications, PCI-DSS for financial data. Verify certifications are current and cover the specific services you’ll use.

Can Data Collection Companies Handle Sensitive or Proprietary Data?

Yes, enterprise-grade providers offer secure environments including on-premises deployment options, dedicated annotation teams, comprehensive NDAs, and compliance frameworks. Discuss your specific security requirements during vendor evaluation and ensure contractual protections are adequate.

How Do I Ensure Data Quality When Working With a Vendor?

Establish clear quality metrics upfront, including accuracy targets and inter-annotator agreement thresholds. Request regular quality audits and sample reviews. Start with pilot projects to validate quality before scaling. Build feedback loops where your team can flag issues and see vendor responsiveness.
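As a rough illustration of how the figures above translate into a budget, the sketch below computes a cost range from the per-data-point rates and a data budget range from the 15-25% guideline. The function names and the example inputs (10,000 data points, a $1,000,000 project) are hypothetical; real quotes will depend on task complexity and vendor terms.

```python
def annotation_cost_range(num_data_points, low_rate=0.10, high_rate=5.00):
    """Return (low, high) cost in dollars using the per-data-point range above."""
    return (round(num_data_points * low_rate, 2),
            round(num_data_points * high_rate, 2))


def data_budget_range(total_project_budget, low_share=0.15, high_share=0.25):
    """Return (low, high) data budget in dollars using the 15-25% guideline."""
    return (round(total_project_budget * low_share, 2),
            round(total_project_budget * high_share, 2))


# Hypothetical pilot: 10,000 data points inside a $1,000,000 AI project.
cost_low, cost_high = annotation_cost_range(10_000)
budget_low, budget_high = data_budget_range(1_000_000)

print(f"Estimated annotation cost: ${cost_low:,.0f} to ${cost_high:,.0f}")
print(f"Suggested data budget: ${budget_low:,.0f} to ${budget_high:,.0f}")
```

The width of the cost range is a reminder that per-item complexity, not volume alone, tends to drive the final price.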
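One widely used measure of inter-annotator agreement is Cohen's kappa, which corrects raw agreement between two annotators for the agreement expected by chance. The sketch below is a minimal pure-Python version; the sample labels and the 0.8 acceptance threshold are illustrative assumptions, and your own threshold should be agreed with the vendor upfront.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: based on how often each annotator used each label.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label for every item
    return (observed - expected) / (1 - expected)


# Hypothetical pilot sample labeled independently by two annotators.
annotator_a = ["cat", "cat", "dog", "dog", "cat", "dog", "cat", "dog"]
annotator_b = ["cat", "cat", "dog", "cat", "cat", "dog", "dog", "dog"]

kappa = cohens_kappa(annotator_a, annotator_b)
AGREEMENT_THRESHOLD = 0.8  # assumed target, not an industry standard
print(f"kappa = {kappa:.2f}, meets threshold: {kappa >= AGREEMENT_THRESHOLD}")
```

Here the annotators agree on 6 of 8 items (75%), but because chance agreement is 50%, kappa comes out at 0.5, which would fall short of the assumed threshold.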